Raku RSS Feeds

Elizabeth Mattijsen (Libera: lizmat #raku) / 2026-06-09T16:55:25

Featured image attribution: “1967–popular science autogyro” by James Vaughan, CC BY-NC-SA 2.0

It is just over 12 days before the start of The Perl and Raku Conference 2026 in Greenville, SC, USA. See https://tprc.us for details.

Weekly Challenge #377 is available for your scrutiny.

Elizabeth Mattijsen has raised the issue of “No Macro Support in Raku 6.e”. This has surfaced some of the original RakuAST project goals and the various forays into macro prototypes such as 007 and ALMA (both by Carl Mäsak). In the spirit of -Ofun, I share the opening lines of the Bond pastiche:

Prague, Czech Republic. Around two o’clock at night.

A single car pulls up to the section headquarters. Section chief JOHN DRYDEN gets out and looks around him before getting into the empty building.

He takes one of the glass elevators up to his office. A silence hangs over the elevator as it rises up to his floor. The England-born station chief is wearing a western suit and tie; only the fur cap gives him a slightly Slavic look. An LED digital display quietly counts up the floor numbers: 3, 4, 5, 6…

The upper floor is all polished steel and glass. Dryden’s footsteps are the only sounds heard as he heads to his office.

He opens the door. The office is veiled in darkness. Some light from the street is coming in from the outside, casting a few stark shadows. Dryden walks across the room, takes off his ushanka and places it on his desk, turns on a desk light, and… stops, eyes wide.

Someone is already in the office.

A very good intro to the purpose of RakuAST (and Macros) is this 2020 paper by Jonathan Worthington – RakuAST: a foundation for Raku macros.

Anton Antonov serves up two scoops of Erdős unit distance conjecture examples … great for the math geeks and OpenAI LLM followers.

I continue to build out the Slangify website … and to make the case for (Raku) DSLs to fix some of the shortcomings of LLMs in structured workflows.

This week, let’s take a look at Raku twigils – this notion follows on from the more familiar sigils, the $, @, % and & characters at the start of a variable name that indicate what kind of thing the variable contains.

A twigil is the cute name for a second character, for example . (dot) or ! (exclamation point), that immediately follows the sigil. Here they are in action:

class Person {
    has $.name;    #'.' dot twigil denotes public
    has $.age;

    method age-group { $!age >= 18 ?? 'an adult' !! 'a child' }
    method age-check { "$.name is $.age-group" }
}

my $p = Person.new(name => 'Nick', age => 42);

say $p.age-check;    #Nick is an adult
say $p.age;          #42
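One detail worth knowing before tightening things up: an attribute declared with the . twigil gets a read-only accessor by default, so $p.age = 43 would fail with the class above. A minimal sketch, assuming you do want outside code to update the age, is to opt in with the is rw trait (the class here is a variation for illustration, not one of the three examples):

```raku
class Person {
    has $.name;         # public, read-only accessor (the default)
    has $.age is rw;    # public, and `is rw` makes the generated accessor writable
}

my $p = Person.new(name => 'Nick', age => 42);
$p.age = 43;            # allowed thanks to `is rw`
say $p.age;             # 43

# Assigning through the read-only `name` accessor dies at run time
try { $p.name = 'Bob' }
say $!.defined ?? 'name is read-only' !! 'name was changed';   # name is read-only
```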

Raku has a powerful Object-Oriented (OO) model, yet it uses friendly keywords – has, is, does – that make it easy to convey code intent with a human feel. Raku OO supports both a “Python-like” approach for ease of use and convenience, and a “Java-like” approach for strict encapsulation that supports design principles like SOLID. Our first example above uses the . (dot) twigil to denote a public attribute – simple.

Now let’s tighten that up and keep the age private to the class.

class Person {
    has $.name;             #'.' dot twigil denotes public
    has $!age is built;     #'!' exclamation twigil denotes private

    method age-group { $!age >= 18 ?? 'an adult' !! 'a child' }
    method age-check { "$.name is $.age-group" }
}

my $p = Person.new(name => 'Nick', age => 42);

say $p.age-check;    #Nick is an adult
say $p.age;          #No such method 'age' for invocant of type 'Person'...

Sure enough, that throws an exception if external code tries to access the private attribute. Note how these attributes are used inside the class scope – $.name and $!age respectively. More on this next week. That needed just two changes:

- $.age to $!age
- the is built trait

What is going on? Check out this third example, just focusing on the $!age attribute:

class Person {
    has $!age;    #'!' exclamation twigil denotes private

    multi method age { $!age }              #getter
    multi method age($x) { $!age = $x }     #setter
}

my $p = Person.new;
$p.age: 42;

say $p.age;    #42

This new Person class spells out by hand roughly what the . (dot) twigil is doing … it is a shorthand that instructs the compiler to generate a boilerplate accessor method. (Strictly speaking, has $.age generates only a read-only getter; add the is rw trait if you also want to assign through it.) Otherwise, the ! (exclamation) twigil gives a private attribute – no accessors means that the attribute can only be accessed from within the class scope. Other notable things:

- $p.age must be explicitly set: by default, private attributes cannot even be initialised via the .new constructor (unless you apply the is built trait, as shown in example 2).
- $p.age: 42; uses method call syntax – you cannot assign a value with =.

Your contribution is welcome, please make a gist and share via the #raku channel on IRC or Discord.

A very interesting return to the macros topic, reminding us of the original aims of RakuAST. This has been an amazing team effort over multiple years, and it is a sensible proposal to ship RakuAST in 6.e and (hopefully) restart the macro work in module land, so as not to delay the next major release.

Please keep staying safe and healthy, and keep up the good work! Even after week 71 of hopefully only 209.

~librasteve


In the last post, the making of the Slangify website was described. Since then a shedload of content has been added – take a look. (Comments, feedback and proposals for additional content are always welcome!)

For me, the most important content on the site states:

LLMs are fluent — but fluency without constraint is noise. Feed an invoice, a contract, or a form to a model and it will extract the key fields regardless of layout or language. The problem is what comes back.

Define a DSL for the expected result — line items, totals, addresses, dates — and instruct the model to respond in that form. A Raku Grammar validates the output, rejects malformed responses, and hands clean structured data to the rest of your pipeline. An inversion-of-control harness can tighten the prompt and retry on failure. The LLM provides the reading; the grammar provides the contract.

Well, reading it back, that is a bit hand-wavy. Hopefully the following makes the value of DSLs in LLM workflows clearer:

DSLs have a strong case in LLM workflows because they reduce ambiguity, constrain generation to valid domain actions, and make outputs easier to validate, reuse, and execute. Recent work and industry writing also suggest DSLs help LLMs produce more reliable structured outputs and can improve repository-scale code generation when the model is adapted to the language.

A DSL lets you express intent in domain terms rather than in generic prose or low-level code, which lowers the search space for the model and reduces the chance of drifting into irrelevant syntax or behavior. That matters because LLMs are probabilistic generators: the more structure you give them, the less room there is for accidental invention. In practice, this means fewer correction cycles and less post-generation cleanup.

DSLs can encode rules directly in the language, so some errors become impossible or at least easier to catch before execution. Compared with free-form prompting, a DSL gives you a built-in contract: valid shapes, allowed values, and domain-specific constraints are part of the workflow itself. That is especially useful when the output must be machine-executed, audited, or handed off to another system.

In LLM workflows, DSLs work well as an intermediate representation between natural language and final execution. The LLM can translate intent into a compact, structured form; downstream tooling can then validate, transform, or run it deterministically. This pattern is attractive for agents and pipeline systems because it turns some of the “reasoning” burden into explicit workflow specification.

The main cost is design effort: a DSL has to be worth learning, documenting, and maintaining. If the domain is broad or fast-changing, a rigid DSL can become a bottleneck, and the LLM may still make domain mistakes unless the language, examples, and validators are well designed. So DSLs are most compelling when the task is repetitive, high-value, and constrained enough that precision matters more than open-ended expression.

DSLs are strongest when the workflow has these properties: repeated task patterns, clear domain rules, expensive mistakes, and a need for reproducibility. Typical examples include agent pipelines, configuration generation, testing, data transformations, and repository-scale edits where structure is more important than prose flexibility. In those settings, a DSL can make LLM use feel less like improvisation and more like controlled compilation.
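To make the “grammar provides the contract” idea a little more concrete, here is a minimal sketch in Raku. Everything in it is hypothetical and for illustration only: the invoice DSL format, the InvoiceDSL grammar, the validate and ask-with-retry subs, and the stubbed &fake-llm that stands in for a real model call. The grammar rejects malformed replies, one semantic rule checks that the line items add up to the stated total, and a small inversion-of-control harness tightens the prompt and retries on failure.

```raku
# Hypothetical invoice DSL: one `item` line per line item, then a `total` line
grammar InvoiceDSL {
    token TOP       { \n* [ <line-item> \n+ ]+ <total> \n* }
    token line-item { 'item' \h+ <desc> \h+ 'qty' \h+ <qty> \h+ 'price' \h+ <money> \h* }
    token total     { 'total' \h+ <money> \h* }
    token desc      { \" <-[\"\n]>+ \" }
    token qty       { \d+ }
    token money     { \d+ '.' \d ** 2 }
}

# Syntax check via the grammar, plus one semantic rule: the items must add up to the total
sub validate(Str $response) {
    my $m = InvoiceDSL.parse($response) or return Nil;
    my $sum = [+] $m<line-item>.map({ .<qty>.Str.Int * .<money>.Str.Rat });
    $sum == $m<total><money>.Str.Rat ?? $m !! Nil
}

# Harness: the caller supplies the model call; we validate, tighten the prompt, and retry
sub ask-with-retry(&ask-llm, Str $prompt is copy, Int :$max-tries = 3) {
    for 1 .. $max-tries {
        with validate(ask-llm($prompt)) -> $m { return $m }
        $prompt ~= "\nReply ONLY in the invoice DSL, with a total equal to the sum of the items.";
    }
    fail "No valid reply after $max-tries tries";
}

# Stub standing in for a real LLM call: a chatty reply first, then a valid one
my @canned = 'Sure! The invoice has two items totalling 44.48.', q:to/END/;
    item "Widget" qty 2 price 9.99
    item "Gadget" qty 1 price 24.50
    total 44.48
    END
my &fake-llm = -> $prompt { @canned.shift };

my $invoice = ask-with-retry(&fake-llm, 'Extract the line items and total from this invoice: ...');
say $invoice<total><money>.Str;    # 44.48
```

The first canned reply fails the grammar, so the harness re-prompts and accepts the second. A real pipeline would hand the resulting Match (or an actions object built from it) to downstream code instead of printing it.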

Slangify – spread the word

~librasteve

PS. I also feel it is time to bring this set of blog posts in line with the new Slangify look and feel. Goodbye “placeholder” DieSeL idea!

In the last two weeks there were quite a lot of discussions, posts, and articles about an OpenAI model disproving a conjecture by Paul Erdős, [OAI1]. Erdős posed the following unit distance problem in 1946:

What is the maximum number u(n) of unit-distance pairs (edges in the unit distance graph) determined by n points in the Euclidean plane?

Here are key elements of the original conjecture:

In graph-theoretic terms, this concerns the maximum edge density in a unit distance graph embeddable in the plane. The square lattice provided the foundational example for believing the exponent was close to 1.

This conjecture was widely believed for decades (with the square grid seen as the model for maximal constructions), but it was disproved in 2026 by an OpenAI model using algebraic or number-theoretic constructions that achieve a polynomial improvement (higher density than any square-grid-based approach).

For small n, other structures (e.g., triangular lattices or algebraic configurations like Moser spindles/rings) can be denser, but Erdős’ original asymptotic thinking centered on the square grid.

This is closely related to (but distinct from) the chromatic number of the plane (Hadwiger-Nelson problem), which also involves unit distance graphs.

Remark: Here is the K(2,3) graph (which is a complete bipartite graph):

#% html
Graph::Complete.new([2,3]).dot(vertex-shape => 'point'):svg

The OpenAI-vs-Erdős discussions “triggered” a particular path of learning-by-doing activities for me, which is outlined here:

powers-representations in “Math::NumberTheory”, [AAp2]

This second blog post (notebook) is the 13th point of the list above: it shows how to make unit distance graphs using 2D lattices generated with complex number operations. (The first blog post is “Erdős unit distance conjecture examples — Part 1: Leaper graphs”, [AA1].)

use Graph;
use JavaScript::D3;
use Math::NumberTheory;
use Math::NumberTheory::Utilities;
use Math::Nearest;
use Math::DistanceFunctions;
use NativeCall;
use NativeHelpers::Array;
use Data::Reshapers;
use Graphviz::DOT::Chessboard;

#% javascript
require.config({
    paths: { d3: 'https://d3js.org/d3.v7.min' }
});

require(['d3'], function(d3) {
    console.log(d3);
});

#% js
js-d3-list-line-plot(10.rand xx 30, background => 'none')

my $title-color = 'Ivory';
my $stroke-color = 'SlateGray';
my $tooltip-color = 'LightBlue';
my $tooltip-background-color = 'none';
my $background = '#1F1F1F';
my $color-scheme = 'schemeTableau10';
my $edge-thickness = 3;
my $vertex-size = 3;

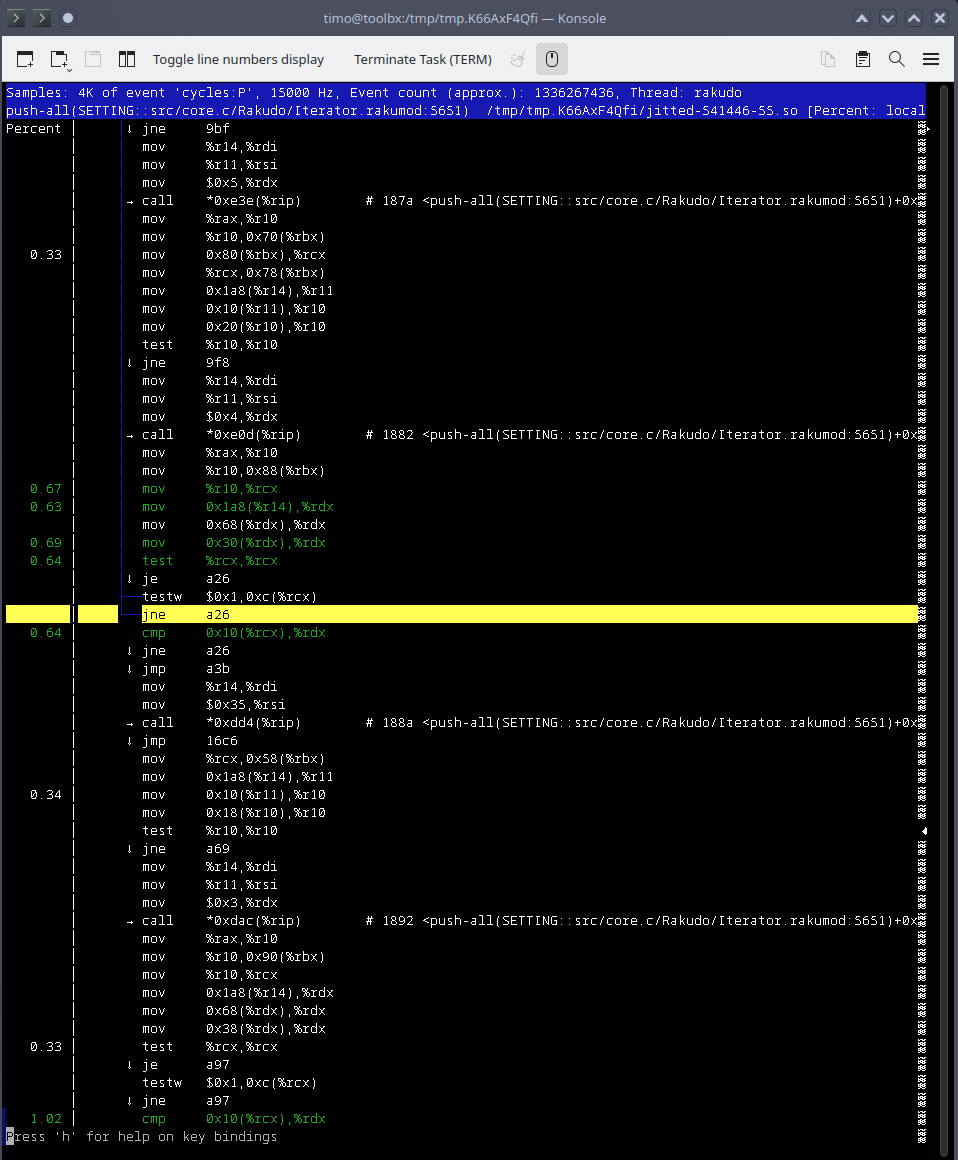

It has been observed that highly dense unit distance graphs can be made over Moser lattices, [PE1]. Moser lattices can be defined as additive subgroups of the complex numbers, ℂ, that are isomorphic to ℤ⁴.
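The basic idea can be sketched in core Raku, without the nearest-neighbor machinery used in this post: generate every integer combination of a few complex generators, then count the pairs of points whose distance is 1 up to a tolerance. The helper names lattice-points and unit-pairs are hypothetical, the generators 1, i, ω, iω with ω = e^(iπ/3) are an assumption modeled on the relation-graph code later in the post, and the pairwise check is O(n²), so this is only practical for small coefficient ranges.

```raku
# Hypothetical helper: all combinations c₁·g₁ + … + cₖ·gₖ with every cⱼ in -$m .. $m
sub lattice-points(@gens, Int $m) {
    my $base = 2 * $m + 1;
    (^($base ** @gens.elems)).map(-> $code {
        # Decode $code into one coefficient per generator (mixed-radix digits)
        my @c = $code.polymod($base xx (@gens.elems - 1));
        [+] (@c.map(* - $m) Z* @gens)
    }).List
}

# Hypothetical helper: number of point pairs at distance 1 (within $tol), by brute force
sub unit-pairs(@pts, Real :$tol = 1e-8) {
    @pts.combinations(2).grep(-> ($p, $q) { abs(abs($p - $q) - 1) ≤ $tol }).elems
}

my $ω = exp(i * π / 3);    # assumption: ω = e^(iπ/3), a unit complex number

# Sanity check: a 3×3 patch of the triangular lattice spanned by 1 and ω
say unit-pairs(lattice-points([1, $ω], 1));    # 16

# A Moser-style lattice spanned by 1, i, ω, iω, with coefficients in -1 .. 1 (3⁴ = 81 points)
my @moser = lattice-points([1, i, $ω, i * $ω], 1);
say @moser.elems, ' points, ', unit-pairs(@moser), ' unit-distance pairs';
```

In the triangular patch, the unit pairs are exactly the steps ±1, ±ω and ±(1 − ω), since |1 − ω| = 1 while |1 + ω| = √3.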

Here we define a function that computes the edges of a Moser lattice graph:

sub unit-distance-graph-edges(Numeric:D $alpha, Int:D $m) {
    # Lattice generators: 1, i, ω and i·ω, with ω = -1/2 + α·i
    my $omega = -0.5 + $alpha * 1i;
    my @r = (-$m ... $m);

    # All combinations a + b·i + c·ω + d·i·ω with integer coefficients in -$m .. $m
    my @pt = do gather {
        for @r -> $a {
            for @r -> $b {
                for @r -> $c {
                    for @r -> $d {
                        my $p = $a + $b * 1i + $c * $omega + $d * 1i * $omega;
                        take [$p.re, $p.im]
                    }
                }
            }
        }
    }

    # Convert each point to a native num64 array for the nearest-neighbor finder
    @pt = @pt.map({ copy-to-carray($_, num64) });
    my &nf = nearest(@pt);

    # For each point, keep the candidates within radius 1.1 that are at distance 1 (up to 1e-5)
    my %conn;
    for @pt.kv -> $k, $p {
        my @neighbors = &nf($p, (Whatever, 1.1));
        %conn{$k} = @neighbors.grep({ abs(1 - euclidean-distance($_, $p)) ≤ 1e-5 });
    }
    %conn = %conn.grep(*.value.elems);

    # Deduplicate the undirected edges, then re-index the vertices
    my @edges = %conn.kv.map( -> $k, @v { @v.map({ [@pt[$k.Int], $_].sort }) }).flat(1)».Array.unique;
    my @vertices = flatten(@edges, 1).unique;
    my %vertex-coords = @vertices.kv.map( -> $k, $v { $k.Str => $v });
    my %vertex-index = %vertex-coords.map({ $_.value => $_.key });
    @edges = @edges.map({ [%vertex-index{$_[0]}, %vertex-index{$_[1]}] });

    return %(:@edges, vertex-coordinates => %vertex-coords, :%vertex-index);
}

Compute the graph with particular parameters:

my %res = unit-distance-graph-edges(sqrt(3)/2, 3);
deduce-type(%res)

# Struct([edges, vertex-coordinates, vertex-index], [Array, Hash, Hash])

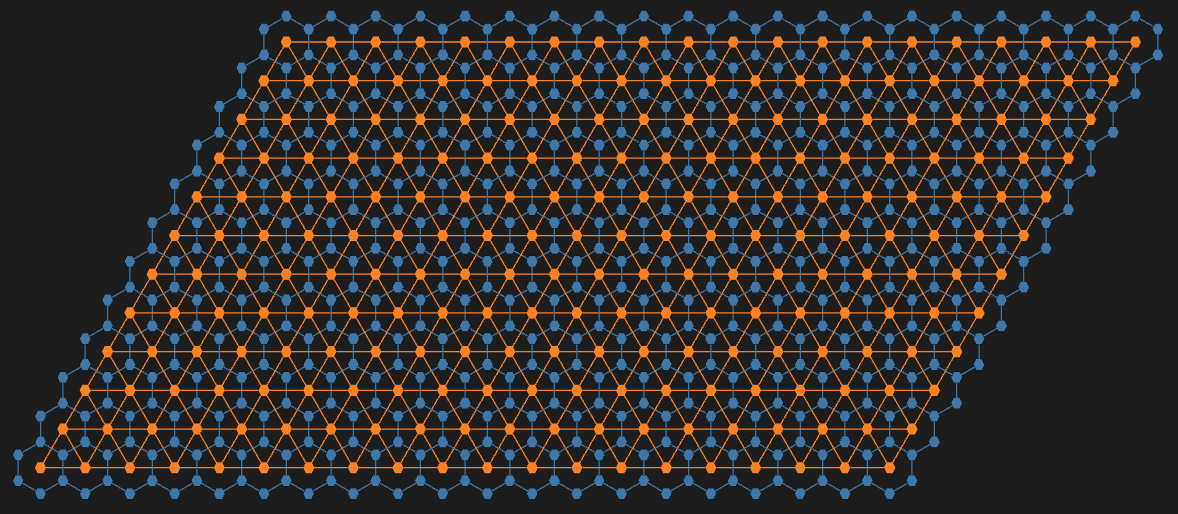

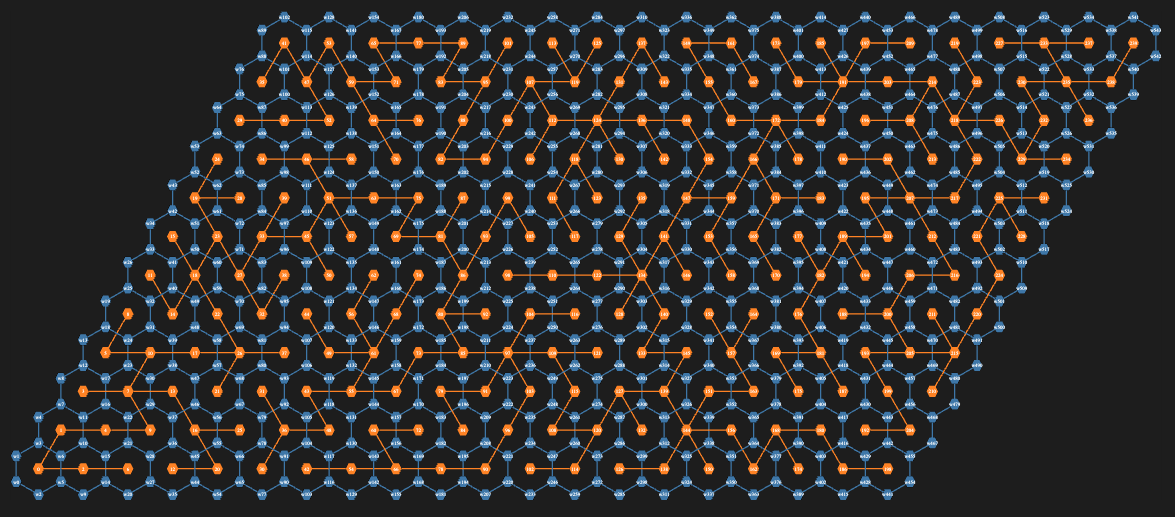

Here we get the graph’s vertex coordinates and plot them:

#% js
my @points = |%res<vertex-coordinates>.values;
js-d3-list-plot(
    @points».Array,
    point-size => 3,
    background => 'none',
    :700width, :700height,
    :!axes,
    :$title-color,
    title => "Number of points : {@points.elems}")

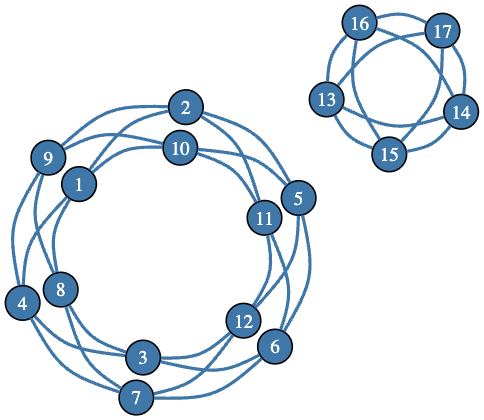

The points of the obtained graph are clearly not uniformly distributed. This prompts us to analyze the vertex degrees. Here we make the graph object:

my $g = Graph.new(edges => %res<edges>, vertex-coordinates => %res<vertex-coordinates>)

# Graph(vertexes => 2401, edges => 11760, directed => False)

Here is a tally of the vertex degrees:

tally($g.vertex-degree)

# {10 => 1000, 12 => 625, 4 => 4, 5 => 8, 6 => 84, 7 => 80, 8 => 500, 9 => 100}
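For a small graph, the same kind of degree tally can be sketched with core Raku Bags, without the Graph package: count how often each vertex appears as an edge endpoint, then tally those counts. The example uses the K(2,3) graph from the remark earlier in the post; it only illustrates the idea and is not how Graph’s vertex-degree is implemented.

```raku
# Edge list of the complete bipartite graph K(2,3): parts {a1, a2} and {b1, b2, b3}
my @edges = <a1 a2> X <b1 b2 b3>;

# Degree of each vertex = number of edge endpoints equal to it
my %degree := @edges.map(*.Slip).Bag;

# Tally of degrees: degree => number of vertices with that degree
my %tally := %degree.values.Bag;

say %degree<a1>, ' ', %degree<b1>;    # 3 2
```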

And here is the vertex degrees distribution:

#% js
js-d3-bar-chart(
    tally($g.vertex-degree).map({ <x y>.Array Z=> [$_.key, $_.value] })».Hash.sort(*<x>),
    :$background,
    :$title-color,
    title => 'Distribution of vertex degrees')

A faster way of computing the graphs above — in Raku — is to use a relation graph over the points of a Moser lattice, [PE1]:

sub omega($t) { exp(i * acos(1 - 1/2 * $t)) }
my $omega = omega(1);    # e^(iπ/3); -0.5 + sqrt(3)/2 * 1i generates the same lattice
my @gen = 1, 1i, $omega, 1i * $omega;    # or, for the same lattice: i <<**>> (0, 1, 4/3, 7/3)
my @p = cross((-2 ... 2) xx 4).map({ dot-product($_.Array, @gen) });
my $gML = Graph::Relation.new(
    {abs(abs(@p[$^a] - @p[$^b]) - 1) ≤ 1e-8},
    ^@p.elems,
    as => {.Str},
    vertex-coordinates => @p.kv.map(-> $k, $v { $k => [$v.re, $v.im]}).Hash);
$gML

# Graph(vertexes => 625, edges => 2800, directed => False)

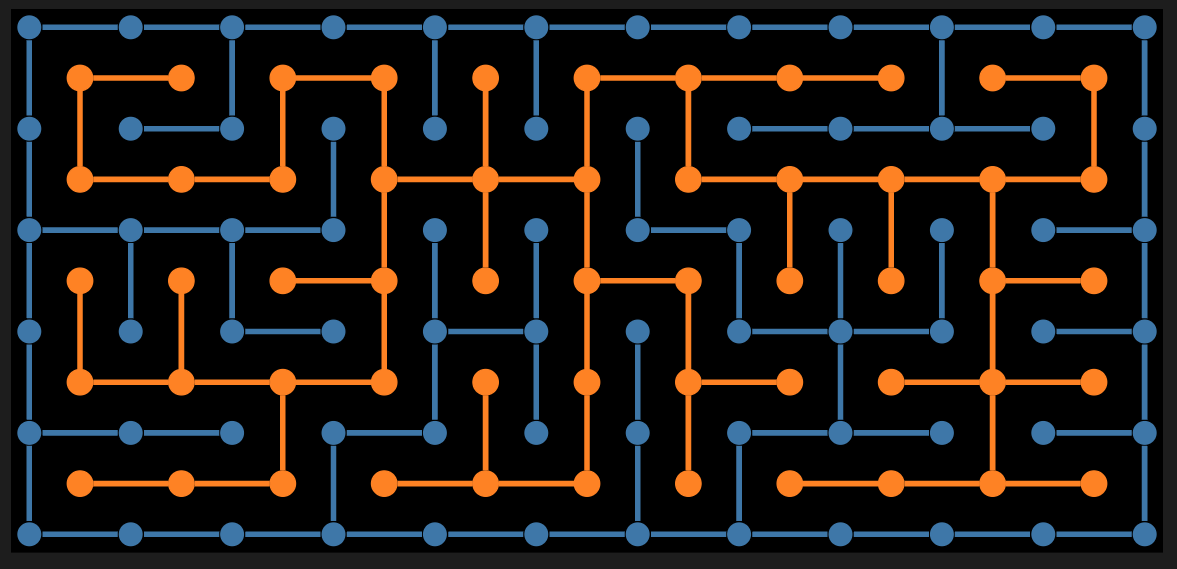

Plot the graph:

#% html
$gML.dot(
    :!vertex-labels,
    vertex-color => 'Orange',
    vertex-fill-color => 'Orange',
    vertex-shape => 'point',
    vertex-width => 0.1,
    vertex-height => 0.1,
    edge-width => 0.4,
    edge-color => 'SteelBlue',
    :8graph-size,
    engine => 'neato'):svg

Let us highlight the graph’s vertices according to their degrees:

my @highlight = $g.vertex-degree(:p).classify(*.value).map({ $_.value».key }).sort(-*.elems);
deduce-type(@highlight)

# Tuple([Vector(Atom((Str)), 1000), Vector(Atom((Str)), 625), Vector(Atom((Str)), 500), Vector(Atom((Str)), 100), Vector(Atom((Str)), 84), Vector(Atom((Str)), 80), Vector(Atom((Str)), 8), Vector(Atom((Str)), 4)])

Plot the graph using Graphviz DOT (and related layout engines):

#% html
$g.dot(
    :!vertex-labels,
    :@highlight,
    vertex-fill-color => 'orange',
    vertex-shape => 'point',
    vertex-width => 0.1,
    vertex-height => 0.1,
    edge-width => 0.2,
    :8graph-size,
    engine => 'neato'):svg

Bubble charts using “JavaScript::D3”:

#% js
my %degrees = $g.vertex-degree():p;
my @data = %res<vertex-coordinates>.map({ x => $_.value.head, y => $_.value.tail, z => %degrees{$_.key}, group => %degrees{$_.key}.Str })».Hash;
@data .= sort({ -$_<z> * 100 + 10 * norm($_<x y>) + cosine-distance($_<x y>, [0, 1]) });

my %opts =
    :500width, :500height, background => 'none',
    z-range-min => 6, z-range-max => 12, opacity => 0.4,
    color-palette => 'Tableau10',
    :!axes, :!tooltip, :!legends, :20margins;

js-d3-bubble-chart(@data.sort(*<z>), |%opts, z-range-min => 8, opacity => 0.2, color-palette => 'Blues[7]', stroke-color => 'Black')
~
js-d3-bubble-chart(@data, |%opts, z-range-min => 10, z-range-max => 24, opacity => 0.3, color-palette => 'RdBu[3]', stroke-color => 'none')
~
js-d3-bubble-chart(@data, |%opts, color-palette => 'Spectral[3]', stroke-color => 'none')

Remark: The z-ranges, opacities, and color palettes were chosen after 10 to 20 experiments in order to reveal the graph structure or configuration and produce compelling, attractive plots.

[AA1] Anton Antonov, “Erdős unit distance conjecture examples — Part 1: Leaper graphs”, (2026), RakuForPrediction at WordPress.

[DC1] Davide Castelvecchi, “AI cracks 80-year-old mathematics challenge — researchers are astonished”, Nature.com, DOI: https://doi.org/10.1038/d41586-026-01651-0.

[PE1] Peter Engel et al., “Diverse beam search to find densest-known planar unit distance graphs”, arXiv:2406.15317 [math.CO], (2025), arxiv.org.

[OAI1] OpenAI, “An OpenAI model has disproved a central conjecture in discrete geometry”, (2026), openai.com.

[PB1] Peter Brass et al., Research Problems in Discrete Geometry, 2005, Springer. ISBN-13: 978-0387-23815-8.

[AAn1] Anton Antonov, “Unit distance graph animations”, (2026), Wolfram Community.

[EPn1] Ed Pegg, “OpenAI disproves Erdős unit distance conjecture”, (2026), Wolfram Community.

[AAp1] Anton Antonov, Graph, Raku package, (2024-2026), GitHub/antononcube.

[AAp2] Anton Antonov, Math::NumberTheory, Raku package, (2025-2026), GitHub/antononcube.

[AAp3] Anton Antonov, Math::DistanceFunctions, Raku package, (2024-2026), GitHub/antononcube.

[AAp4] Anton Antonov, Math::DistanceFunctions::Native, Raku package, (2024), GitHub/antononcube.

[AAp5] Anton Antonov, Graphviz::DOT::Chessboard, Raku package, (2024), GitHub/antononcube.

[AAp6] Anton Antonov, Image::Markup::Utilities, Raku package, (2023-2026), GitHub/antononcube.

In the last two weeks there were quite a lot of discussions, posts, and articles about an OpenAI model disproving a conjecture by Paul Erdős, [OAI1]. Erdős posed the following unit distance problem in 1946:

What is the maximum number u(n) of unit-distance pairs (edges in the unit distance graph) determined by n points in the Euclidean plane?

Here are key elements of the original conjecture:

In graph-theoretic terms, this concerns the maximum edge density in a unit distance graph embeddable in the plane. The square lattice provided the foundational example for believing the exponent was close to 1.

This conjecture was widely believed for decades (with the square grid seen as the model for maximal constructions), but it was disproved in 2026 by an OpenAI model, [OAI1], using algebraic or number-theoretic constructions that achieve a polynomial improvement (higher density than any square-grid-based approach).

For small n, other structures (e.g., triangular lattices or algebraic configurations like Moser spindles/rings) can be denser, but Erdős’ original asymptotic thinking centered on the square grid.

This is closely related to (but distinct from) the chromatic number of the plane (Hadwiger-Nelson problem), which also involves unit distance graphs.

Remark: Here is the K(2,3) graph (which is a complete bipartite graph):

#% html
Graph::Complete.new([2,3]).dot(vertex-shape => 'point'):svg

The OpenAI-vs-Erdős discussions “triggered” a particular path of learning-by-doing activities for me, which is outlined here:

powers-representations in “Math::NumberTheory”, [AAp2]

This document (notebook) is the 11th point of the list above: it shows how to make, plot, and animate collections of unit distance leaper graphs.

use Graph;
use Graphviz::DOT::Chessboard;
use Math::NumberTheory;
use Math::DistanceFunctions;
use Data::Reshapers;
use Image::Markup::Utilities;

my $title-color = 'Ivory';
my $stroke-color = 'SlateGray';
my $tooltip-color = 'LightBlue';
my $tooltip-background-color = 'none';
my $background = '#1F1F1F';
my $color-scheme = 'schemeTableau10';
my $edge-thickness = 3;
my $vertex-size = 3;

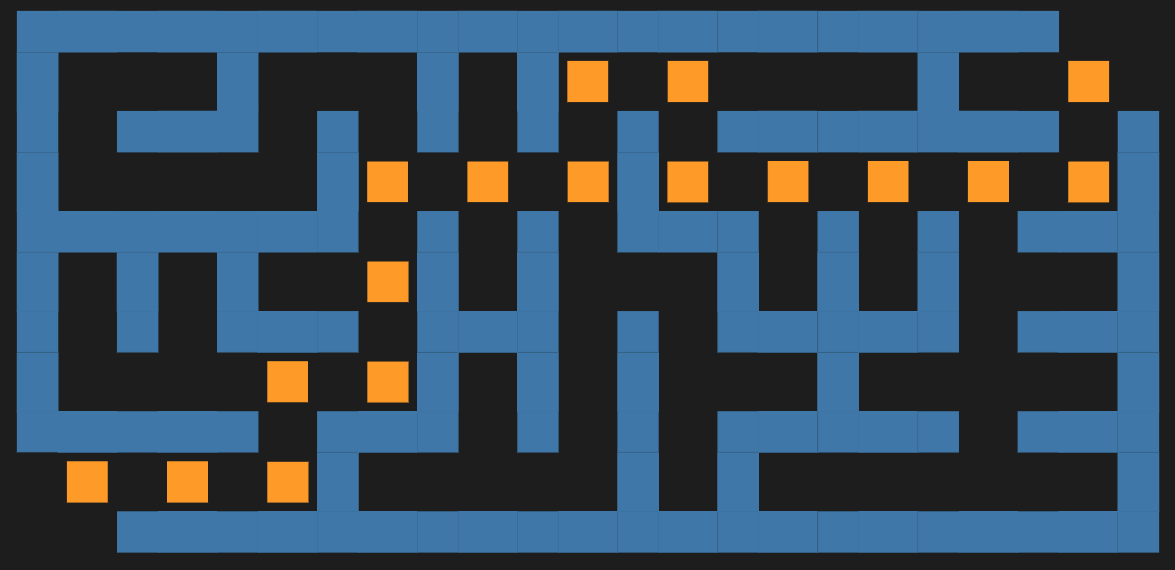

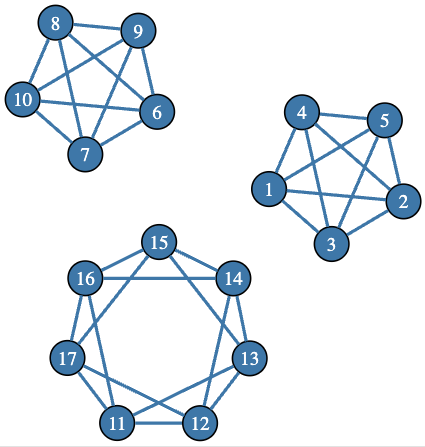

In order to construct square grid graphs with edges of length 1, we consider the family of leaper graphs. The leaper graph generalizes the Knight Tour graph. Here are the moves of the Camel graph, the Flamingo graph, and the Zebra graph, which are leaper graphs, each parameterized by its own pair of leap lengths:

#% html
my %opts-brown = black-square-color => 'SandyBrown', white-square-color => 'Moccasin', :65font-size;
my $fenC = '8/2N1N2/8/N5N1/3n3/N5N1/8/2N2N2';
my $c = dot-chessboard($fenC, :7r, :7c, :4size, background=>'none', |%opts-brown, :svg);
$c .= subst(/ '♞' | '♘'/, '🐪', :g);

my %opts-blue = black-square-color => 'DarkSeaGreen', white-square-color => 'Wheat', :65font-size;
my $fenF = '8/N1N5/8/8/8/6N1/1n6/6N1';
my $f = dot-chessboard($fenF, :7r, :7c, :4size, background=>'none', |%opts-blue, :svg);
$f .= subst(/ '♞' | '♘'/, '🦩', :g);

my %opts-green = black-square-color => '#779556ff', white-square-color => '#ebedb7', :65font-size;
my $fenZ = '8/1N3N1/N5N1/8/3n3/8/N5N1/1N3N1';
my $z = dot-chessboard($fenZ, :7r, :7c, :4size, background=>'none', |%opts-green, :svg);
$z .= subst(/ '♞' | '♘'/, '🦓', :g);

$c ~ $f ~ $z

Remark: From the code and plots above it can be seen that the package “Graphviz::DOT::Chessboard”, [AAp5], can handle chess boards and FEN notations with non-standard sizes.

We can ask ourselves:

To answer the first question, we observe that we can rescale the “chess board” of the leaper graph in such a way that each leap has an “over the air” distance of 1. For example, the integer coordinates of an 8×8 board can be divided by 5, and that would make the leaper graphs parameterized with (0, 5) and (3, 4) have edges of unit length, since 0² + 5² = 3² + 4² = 5². These two leaps are the representations of 25 as a sum of two squares:

powers-representations(25, 2, 2)

# ((0 5) (3 4))

my ($rows, $columns) = (8, 8);
my $g1 = Graph::Leaper.new(moves => [0, 5], :$rows, :$columns);
my $g2 = Graph::Leaper.new(moves => [3, 4], :$rows, :$columns);
my $g = $g1.union($g2)

# Graph(vertexes => 64, edges => 128, directed => False)
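To see where the edge count of 128 comes from, here is a small core-Raku sketch that builds leaper edges directly. leaper-edges is a hypothetical helper, not the Graph::Leaper API: for each square it applies the leap vectors (±a, ±b) and (±b, ±a), keeps the moves that stay on the board, and stores each undirected edge once. On an 8×8 board the (0, 5) leaper should give 48 edges and the (3, 4) leaper 80, and dividing the coordinates by 5 should turn every leap into a unit-length edge.

```raku
# Hypothetical stand-in for Graph::Leaper: undirected edges of the ($a, $b)-leaper graph
sub leaper-edges(Int $a, Int $b, Int :$rows = 8, Int :$columns = 8) {
    # Leap vectors (±a, ±b) and (±b, ±a); duplicates arise when a or b is 0
    my @moves = (($a, $b), ($b, $a)).map(-> ($x, $y) { |(($x, -$x) X ($y, -$y)) }).unique(:as(*.join(',')));
    my %edges;
    for ^$rows X ^$columns -> ($r, $c) {
        for @moves -> ($dr, $dc) {
            my ($r2, $c2) = $r + $dr, $c + $dc;
            next unless 0 ≤ $r2 < $rows && 0 ≤ $c2 < $columns;
            # Key each undirected edge by its sorted endpoints so it is stored only once
            %edges{ ("$r,$c", "$r2,$c2").sort.join('|') } = True;
        }
    }
    %edges.keys.sort.List
}

my @edges = (|leaper-edges(0, 5), |leaper-edges(3, 4)).unique;
say @edges.elems;    # 128

# Divide by 5 = √25 and measure every edge
my @lengths = @edges.map({
    my ($p, $q) = .split('|').map({ .split(',').map(*.Int).List });
    sqrt(($p[0] - $q[0])² + ($p[1] - $q[1])²) / 5
});
say @lengths.unique;    # (1)
```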

Here we rescale the vertex coordinates in order to get edges with unit length:

sink $g.vertex-coordinates = $g.vertex-coordinates.map({ $_.key => $_.value <</>> 5}).Hash

Plot the graph:

#% html
$g.dot(
    engine => 'neato',
    graph-size => 5,
    vertex-shape => 'point',
    vertex-width => 0.02,
    vertex-height => 0.02,
    vertex-color => 'SlateGray',
    vertex-fill-color => 'SlateGray',
    edge-thickness => 0.2):svg

Let us convince ourselves that the edges of that graph have unit length:

$g.edges
andthen .map({ $g.vertex-coordinates{$_.key}, $g.vertex-coordinates{$_.value} })
andthen .map({ euclidean-distance(|$_) })
andthen .List
andthen (min => $_.min, max => $_.max)

# (min => 0.9999999999999998 max => 1)

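That was just one relatively small graph. Can we find other leaper graphs based on two or more sum-of-two-squares representations? Here we search for integers up to 10,000 that are squares of a prime and that have at least two representations as a sum of two squares: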

(1...10_000).grep({ my @fs = |factor-integer($_); @fs.elems == 1 && @fs.head.tail == 2 }).map({ $_ => powers-representations($_, 2, 2) }).grep({ $_.value.elems ≥ 2 })

# (25 => ((0 5) (3 4)) 169 => ((0 13) (5 12)) 289 => ((0 17) (8 15)) 841 => ((0 29) (20 21)) 1369 => ((0 37) (12 35)) 1681 => ((0 41) (9 40)) 2809 => ((0 53) (28 45)) 3721 => ((0 61) (11 60)) 5329 => ((0 73) (48 55)) 7921 => ((0 89) (39 80)) 9409 => ((0 97) (65 72)))
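The same search can be sketched in core Raku, as a cross-check that does not depend on Math::NumberTheory. The helper two-squares-reps is hypothetical (it only mimics what powers-representations($n, 2, 2) returns for these inputs), and the sketch uses the fact that n = p² ≤ 10,000 with p prime is the same as p prime with p ≤ 100.

```raku
# Hypothetical helper: all pairs (a, b) with a ≤ b and a² + b² == $n
sub two-squares-reps(Int $n) {
    (0 .. $n.sqrt.floor).map(-> $a {
        my $b2 = $n - $a²;
        my $b  = $b2.sqrt.floor;
        ($a ≤ $b && $b² == $b2) ?? ($a, $b) !! Empty
    }).List
}

# Squares of primes up to 100 that have at least two representations
my @primes = (2 .. 100).grep(*.is-prime);
my @found  = @primes.map(-> $p { $p² => two-squares-reps($p²) }).grep(*.value.elems ≥ 2);

say @found.map(*.key).join(' ');    # 25 169 289 841 1369 1681 2809 3721 5329 7921 9409
```

The survivors are exactly the squares of the primes p ≡ 1 (mod 4); for the other primes, p² = 0² + p² is the only representation.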

For example, if we pick the third smallest number of the ones found, 289 = 17², we can make two leaper graphs, with moves (0, 17) and (8, 15), and combine them as above.

my $g1 = Graph::Leaper.new(moves => [0, 17], :27rows, :27columns);
my $g2 = Graph::Leaper.new(moves => [8, 15], :27rows, :27columns);
my $g = $g1.union($g2)

# Graph(vertexes => 729, edges => 1452, directed => False)

Here we rescale vertex coordinates (in order to get unit length edges):

sink $g.vertex-coordinates = $g.vertex-coordinates.map({ $_.key => $_.value <</>> 17 }).Hash;

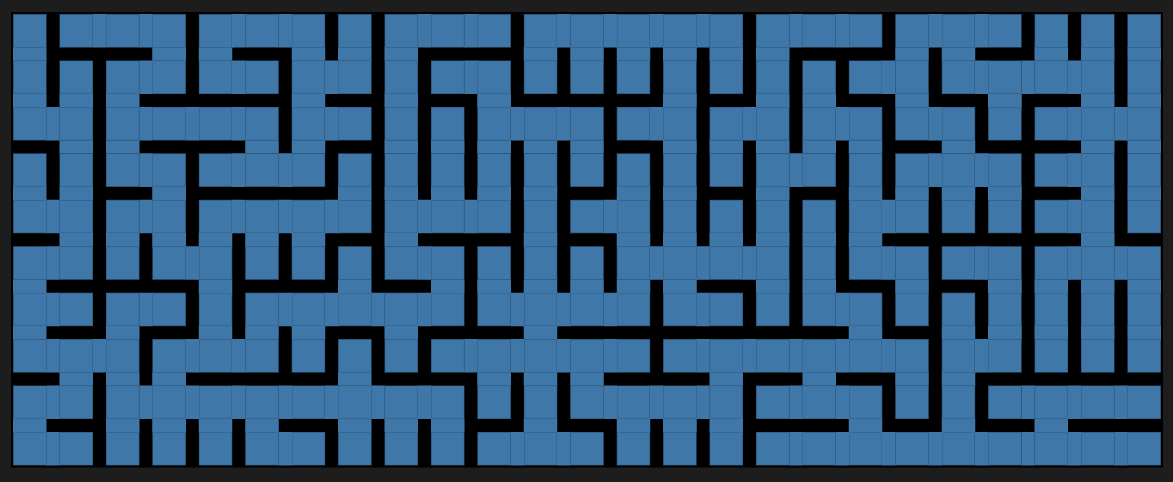

Plot the graph:

#% html
$g.dot(engine => 'neato', graph-size => 8, edge-thickness => 0.5, vertex-shape => 'point', vertex-width => 0.1, vertex-height => 0.1, :!vertex-labels ):svg

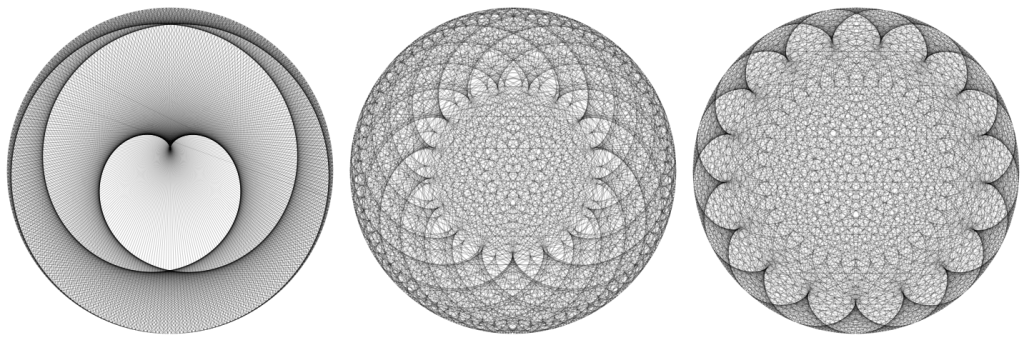

We can also make leaper graphs just to produce interesting-looking patterns of lines. For example:

#% html
my $g = Graph::Leaper.new(moves => [31, 21], :45rows, :45columns);
$g.dot(engine => 'neato', :8graph-size, edge-thickness => 0.4, vertex-shape => 'point', :0vertex-width, :0vertex-height):svg

Let us make an animation of images of leaper graphs. First we derive the graph plots:

my @all-moves = (1, 2 ... 35) X (1, 2 ... 21);
@all-moves .= grep({ are-coprime(|$_) && $_.all ≥ 6 && $_.sum ≥ 30 });
say 'all-moves : ', @all-moves.elems;

my @rules = @all-moves.pairs.map({
    my $i = $_.key;
    my @moves = |$_.value;
    say (:$i) if $i %% 20;
    my $g = Graph::Leaper.new(:@moves, :45rows, :45columns);
    $i => $g.dot(
        engine => 'neato',
        :8graph-size,
        vertex-shape => 'point',
        vertex-width => 0,
        vertex-height => 0,
        edge-thickness => 0.23,
        edge-color => 'ivory',
        background => 'black',
    ):svg
});

deduce-type(@rules)    # ≈2m

# all-moves : 185
# i => 0
# i => 20
# i => 40
# i => 60
# i => 80
# i => 100
# i => 120
# i => 140
# i => 160
# i => 180

# Vector(Pair(Atom((Int)), Atom((Str))), 185)

Sort the graph plots according to the sums of the squares of their leaps (and then take the SVG values):

my @imgs = @rules.sort({ sum(@all-moves[|$_.key] <<**>> 2) })».value;
deduce-type(@imgs)

# Vector(Atom((Str)), 185)

Make an animation with the list of images (SVG strings):

#% html
sink my $res = list-animate(@imgs, :10duration, repeat-count => 'indefinite')
# ≈11s without rendering
# ≈3m with rendering

Export the obtained SVG animation into one file:

spurt('./img/leaper-graphs-from-45-35-21-coprime-30sum.svg', $res)

# True

In general, the SVG animation file can be quite large (e.g. ≈50MB or 200MB). That is why we might prefer making PNG or JPEG images for the graph plots and then combining them into a movie.

Export each SVG graph plot (into a directory of frames), then convert each SVG file into a PNG image using rsvg-convert:

sink for @rules.sort({ sum(@all-moves[|$_.key] <<**>> 2) }).kv -> $index, $p {
    my $svg-content = $p.value;
    my $filename = sprintf "./img/leaper-graph-frames/frame%05d.svg", $index;
    say (:$filename) if $index %% 20;
    spurt $filename, $svg-content;
    my $png-filename = sprintf "./img/leaper-graph-frames/frame-%05d.png", $index;
    shell "rsvg-convert -w 800 -h 800 -o $png-filename $filename";
}
# ≈45s

# filename => ./img/leaper-graph-frames/frame00000.svg
# filename => ./img/leaper-graph-frames/frame00020.svg
# filename => ./img/leaper-graph-frames/frame00040.svg
# filename => ./img/leaper-graph-frames/frame00060.svg
# filename => ./img/leaper-graph-frames/frame00080.svg
# filename => ./img/leaper-graph-frames/frame00100.svg
# filename => ./img/leaper-graph-frames/frame00120.svg
# filename => ./img/leaper-graph-frames/frame00140.svg
# filename => ./img/leaper-graph-frames/frame00160.svg
# filename => ./img/leaper-graph-frames/frame00180.svg

Make a movie using FFmpeg:

#% bash
# ffmpeg -framerate 6 -pattern_type glob -i './img/leaper-graph-frames/frame-*.png' -c:v libx264 -pix_fmt yuv420p -crf 23 -movflags +faststart ./img/output.mp4

The animation can be seen here (Imgur).

[OAI1] OpenAI, “An OpenAI model has disproved a central conjecture in discrete geometry”, (2026), openai.com.

[AAn1] Anton Antonov, “Unit distance graph animations”, (2026), Wolfram Community.

[EPn1] Ed Pegg, “OpenAI disproves Erdős unit distance conjecture”, (2026), Wolfram Community.

[AAp1] Anton Antonov, Graph, Raku package, (2024-2026), GitHub/antononcube.

[AAp2] Anton Antonov, Math::NumberTheory, Raku package, (2025-2026), GitHub/antononcube.

[AAp3] Anton Antonov, Math::DistanceFunctions, Raku package, (2024-2026), GitHub/antononcube.

[AAp4] Anton Antonov, Math::DistanceFunctions::Native, Raku package, (2024), GitHub/antononcube.

[AAp5] Anton Antonov, Graphviz::DOT::Chessboard, Raku package, (2024), GitHub/antononcube.

[AAp6] Anton Antonov, Image::Markup::Utilities, Raku package, (2023-2026), GitHub/antononcube.

It is just over 20 days before the start of The Perl and Raku Conference 2026 in Greenville, SC, USA.

The Perl and Raku Conference or TPRC (formerly known as YAPC::NA) is a high-quality, inexpensive technical conference with its roots in the Perl Mongers user groups. The conference celebrates the Perl and Raku programming languages. It is meant to be accessible to anyone, regardless of experience, yet valuable to even the most skilled programmers. Each year, the conference attracts programmers from around the world.

The conference planning team needs to know if you are coming so they can make sure and have everything ready for you (t-shirt, lunches, name badge, planetarium tickets, etc). Please register today!

Registration: https://tprc.us

Weekly Challenge #376 is available for your inspection.

SIBL shows the path from this ugly (perl) mess to this much better (raku) script. An eloquent demonstration of Raku’s expressiveness.

lizmat reminds us of the advantages of “Moving printf formats forward”: the stated plan was to reimplement printf formats in RakuAST, with the multiple benefits described. This upgrade has now landed in sprintf in the latest Raku, as announced in the previous episode. Yay!

A new way of creating strings from a given set of values and format string (in other words printf functionality) has been implemented using RakuAST in the Raku Programming Language, making it up to 30x as fast.

Anton Antonov adds some heft to the DSL conversation…

I continue to feed the DSL fire…

A tool to check the compliance of Linux service configuration files, plus the reddit comments.

The Gentoo Linux wiki adds a page for Raku: https://wiki.gentoo.org/wiki/Raku

Frequently, this section is inspired by short exchanges on the IRC/Discord chat. Today is no exception. Thanks to avuserow for the inspiration.

Prior to this, I had (wrongly) assumed that the $scale argument of the round() routine is confined to decimal steps (powers of ten), like this:

multi round(Numeric(Cool), $scale = 1)
multi method round(Cool:D: $scale = 1)

say 1.7.round;          # OUTPUT: «2»
say 1.07.round(0.1);    # OUTPUT: «1.1»
say 21.round(10);       # OUTPUT: «20»

Then I saw this and inquired as to its use case: being able to round to the nearest 0.25 via $x.round(0.25) is great for e.g. font sizes.

(25/11).round(0.25)    # 2.25

Lightbulb moment. Apparently Raku and Ruby are among a few languages with this syntax. Here are some more examples for -Ofun:

$x.round(0.25)    # typography
$x.round(8)       # snap to grid
$x.round(10)      # axis ticks
$x.round(15)      # calendar time
$x.round(0.2)     # star rating
$x.round(2.5)     # gym weight plates
$x.round(1024)    # network packet payload
$x.round(22.5)    # map bearings
$x.round(1/16)    # musical timing
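A minimal way to try a few of these: each pair below maps a sample value (chosen for illustration) to the scale it is snapped to. round($scale) rounds to the nearest multiple of $scale; none of the sample values sit exactly halfway, so the tie-breaking rule does not matter here. The expected results are 2.25, 30, 5, 1024 and 0.6875.

```raku
# Snap sample values to the nearest multiple of a scale with the built-in round($scale)
my @cases = (25/11) => 0.25,    # typography
            37      => 15,      # calendar time: minutes to the nearest quarter hour
            4.3     => 2.5,     # gym weight plates
            1000    => 1024,    # network packet payload
            0.7     => 1/16;    # musical timing

for @cases -> (:key($x), :value($scale)) {
    printf "%s rounded to a multiple of %s gives %s\n", $x, $scale, $x.round($scale);
}
```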

Your contribution is welcome, please make a gist and share via the #raku channel on IRC or Discord.

Humberto


It is great to see the teamwork on RakuAST bring real performance benefits – thanks to lizmat for reminding us of the plan she set out and for making steady progress towards the stated goal, and to the whole RakuAST effort behind that.

Please keep staying safe and healthy, and keep up the good work! Even after week 70 of hopefully only 209.

~librasteve


In the last episode, I made a DSL in both Python and Raku using Claude Code. If you want to know the score – then check out the previous post.

At the start of this segment of my journey, I resolved to commit to AI tooling, since that is the reality for all devs these days. And I chose Anthropic’s Claude Code as the most typical tool for effective code development.

OK, after some mucking about, I have the Claude app running on macOS, and it is running Claude Code in a project directory. I am following the Getting Started guide. Good that it all works – just a couple of hiccups for me while getting to grips with a new tool, typical of any change of that magnitude.

Per my previous post, I expected Claude to do a good job of designing my (very simple) Invoice Domain Specific Language (DSL) anyway. Cool. Just needed a prompt like:

Bingo.

On the same basis – for there is much Python out there – I expected Claude to do a bang-up job of making a Python DSL parser too.

Again, bingo. In fact, Claude offered me the choice of building it all in pure Python or using the Lark parser module. I chose Lark, since that seems a fairer apples-to-apples comparison.

It did a great job of guiding me through .venv, pip3 and so on.

When it came to Raku, I was less confident. Sure, there are ~1500 Raku questions and answers on Stack Overflow, 200 Raku code examples on Rosetta Code, and very comprehensive docs and tutorials, but to be honest I thought I might need to make a custom AI cheat sheet (i.e. a context) to get even a half-decent result. Raku is not new (launched in 2015 as Perl 6), but it is still a minority sport.

I was extremely and pleasantly surprised to find that Claude proposed a Raku Grammar solution and practically wrote the “perfect” solution (certainly better than the code I would have written in a minute or two in one pass).

Blown away!

I wanted to post the side-by-side comparison of Python and Raku as a webpage anyway, using the Raku HARC Stack … that is HTMX, Air, Red and Cro. Why? Well, the `Air::Plugin::Hilite` module supports any language (e.g. Python) as well as Raku, and, as we shall see, it is oh so cool for just making a grid that looks good.

And then I thought: let’s use my new-found Claude skills to do that, and share the process and review the result, i.e. as another proof point that AI tooling (well, at least Claude Code) works as smoothly with Raku as with more popular programming languages.

lizmat kindly agreed to let me adopt the name Slangify for this new site and register the domain … since I think we need a presence for Raku DSL stuff on the interweb going forward.

Now, an astute reader will ask “why bother to ask Claude to make a website using the Raku Air module? Why not just have Claude build it directly in HTML?” Indeed, for simple sites such as these, that is a very good option.

It’s kind of a special case of “why have Claude (as with any AI) write in any human-readable language as an intermediate? After all, it’s only going to compile to machine code anyway?” The two sides of that coin are:

So really it boils down to which language you, the human, prefer to use for the interaction.

In the case of a website, I prefer to work at the level of Raku and Air. Others may prefer Ruby and Rails by the same token, or similar. I do not want to deal with HTML, CSS and JS level abstractions, since (i) Claude is going to need a lot of coaching to provide the Locality of Behaviour I prefer and (ii) Claude was trained on a very large set of very messy website code in the wild.

Now that is out of the way, let’s just do it. The following is a direct copy of the Claude transcript to help undecideds see how it looks.

make a new Raku website project slangify.org – start from git clone https://github.com/librasteve/cragcli-info so it should already work with mi6

I’ll explore the template repository to understand its structure before planning the new project.

Ran agent

Haiku 4.5

Explore cragcli-info repo structure

Good exploration. Let me ask a few clarifying questions before writing the plan.

Used ToolSearch

Asked

Location

Where should the project be created on disk?

ok, use slangify.org as the home dir – propose a quay.io name in line with the current pattern

What Docker registry should CI push images to?

quay.io/librasteve (Recommended)

What initial content/purpose should the site have?

Minimal stub (Recommended)

Now let me read the actual source files from the clone to understand what needs changing.

Read 13 files, ran a command

Now I have everything I need. Let me write the plan.

Created a file, used a tool

Proposed plan

Project setup

Starting with the clone and file rename.

Ran

Clone repo, remove origin remote, rename module file

Now update all the files:

Created 2 files, ran a command, read a file

Now update the runner scripts and config files:

Read 7 files, edited 6 files, created a file

Now make the initial commit:

Ran 2 commands

Done. Here’s a summary of what was created in slangify.org/:

File Change

To run locally: raku -Ilib air-serve.raku (after zef install --deps-only .). To build and push the Docker image, run scripts/cibuild.sh from a GitHub Actions workflow with QUAY_USERNAME/QUAY_PASSWORD secrets set.

So, that was it. 2 commands and about 12 minutes of elapsed time.

Here’s the result: https://github.com/librasteve/slangify.org (check out the initial commit to see the first result)

I took a quick peek at Claude’s Raku output – nothing awry. So I decided to push forward to see how it looks.

I had to give Claude the following commands to complete the job:

`<script defer src="https://cloud.umami.is/script.js" data-website-id="4464d54a-3dbe-4f79-8d45-1ef4f22cd677"></script>`

In addition to the Claude aspects of the build, I did these things:

slangify.org

sudo docker compose down
sudo docker compose pull
sudo docker compose up -d

No need to set up the server crontab, since that already covers all sites.

If you are paying attention, you will recall the initial idea was to have the Python and Raku DSL parser code side by side in a single page on the site.

Here’s the initial site source code, using Air::Functional:

Hopefully you can see why I prefer the compact, functional approach of Air over the HTML / CSS noise.

So I added in (manually this time) Air::Plugin::Hilite and the two code examples (with some judicious spaces on the Raku side).

Here’s the final source code (some folding):

See for yourself over at https://slangify.org/comparison

The above flow went as described, very smoothly, but I couldn’t help wondering why the theme colour was Pico CSS green, even though I had specified blue. It turns out that the SCSS build on my local machine was failing…

sass styles.scss styles.css

Error: Can't find stylesheet to import.
  ╷
1 │ ┌ @use "node_modules/@picocss/pico/scss" with (
2 │ │   $theme-color: "blue"
3 │ │ );
  │ └─^
  ╵
  styles.scss 1:1  root stylesheet

okaay – looks like I need to npm install @picocss/pico – yeah, Claude forgot to copy over the npm Pico CSS module (!)

Final note – I have now improved the Air docs to cover Dart Sass and Pico CSS setup.

Hopefully see you here for the next gripping episode!

~librasteve

The Raku package “DSL::Examples”, [AAp1], is a “data package” with examples of translations of DSL commands into programming code.

The DSL examples are suitable for LLM few-shot training. The sub llm-example-function provided by “LLM::Functions”, [AAp3], can be effectively used to create translation functions utilizing those examples.

The use of such LLM translation functions is exemplified below, and also in the presentation “Robust LLM pipelines (Mathematica, Python, Raku)”, [AAv1].

Similar translations — with much less computational resources — are achieved with grammar-based DSL translators; see “DSL::Translators”, [AAp2]. The package “LLM::Resources”, [AAp4], has LLM-graphs for code generation that utilize the DSL examples of this package.

Get all examples:

use DSL::Examples;
use Data::TypeSystem;
dsl-examples() ==> deduce-type()

# Assoc(Atom((Str)), Tuple([Assoc(Atom((Str)), Tuple([Assoc(Atom((Str)), Atom((Str)), 17), Assoc(Atom((Str)), Atom((Str)), 14), Assoc(Atom((Str)), Atom((Str)), 32), Assoc(Atom((Str)), Atom((Str)), 20), Assoc(Atom((Str)), Atom((Str)), 20), Assoc(Atom((Str)), Atom((Str)), 27), Assoc(Atom((Str)), Atom((Str)), 6)]), 7), Assoc(Atom((Str)), Tuple([Assoc(Atom((Str)), Atom((Str)), 10), Assoc(Atom((Str)), Atom((Str)), 26), Assoc(Atom((Str)), Atom((Str)), 17), Assoc(Atom((Str)), Atom((Str)), 20)]), 4), Assoc(Atom((Str)), Tuple([Assoc(Atom((Str)), Atom((Str)), 15), Assoc(Atom((Str)), Atom((Str)), 23), Assoc(Atom((Str)), Atom((Str)), 33), Assoc(Atom((Str)), Atom((Str)), 20)]), 4), Assoc(Atom((Str)), Tuple([Assoc(Atom((Str)), Atom((Str)), 15), Assoc(Atom((Str)), Atom((Str)), 10), Assoc(Atom((Str)), Atom((Str)), 20), Assoc(Atom((Str)), Atom((Str)), 6)]), 4)]), 4)

Tabulate all translation languages and available workflow examples:

use Data::Translators;
dsl-examples(from => 'English').map({ $_.key X $_.value.keys }).flat(1).map({ <language workflow> Z=> $_ })».Hash.sort.Array
==> to-dataset()
==> to-html(field-names => <language workflow>)

| language | workflow |
|---|---|
| Python | LSAMon |
| Python | QRMon |
| Python | SMRMon |
| Python | pandas |
| R | DataReshaping |
| R | LSAMon |
| R | QRMon |
| R | SMRMon |
| Raku | DataReshaping |
| Raku | LSAMon |
| Raku | SMRMon |
| Raku | TriesWithFrequencies |
| WL | ClCon |
| WL | DataReshaping |
| WL | LSAMon |
| WL | QRMon |
| WL | SMRMon |
| WL | Tabular |
| WL | TriesWithFrequencies |
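The tabulation pairs each language with each of its workflow names via the cross operator X, then zips each pair with the column names via Z=>. The sketch below replays that idea on a small hand-made hash (a hypothetical stand-in for dsl-examples(from => 'English'), so it runs without the package). Two small variations on the original: it slips the pairs with | instead of calling .flat(1), and it sorts on explicit keys rather than on whole hashes.

```raku
# Hypothetical stand-in for the nested language => workflow => examples structure
my %examples =
    Raku   => %( SMRMon => %(), LSAMon => %() ),
    Python => %( QRMon  => %(), LSAMon => %() );

my @rows = %examples
    .map({ |($_.key X $_.value.keys) })          # one (language, workflow) List per workflow
    .map({ %( <language workflow> Z=> @$_ ) })   # zip column names with values into a row Hash
    .sort({ .<language>, .<workflow> });         # order by language, then by workflow

say "| {.<language>} | {.<workflow>} |" for @rows;
```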

Note that dsl-examples is given the language to translate from (here, from => 'English'). Currently, the package has DSL examples with Bulgarian, English, Portuguese, and Russian as from-languages.

Get the examples for Latent Semantic Analysis (LSA) Monadic pipeline segments in Python:

dsl-examples('Python', 'LSAMon') ==> deduce-type(:tally)

# Assoc(Atom((Str)), Atom((Str)), 15)

Make an LLM example function for translation of LSA workflow building commands:

use LLM::Functions;
my &llm-pipeline-segment = llm-example-function(dsl-examples()<WL><LSAMon>);

Run the LLM function over a list of DSL commands:

my @commands =
    "use the dataset aAbstracts",
    "make the document-term matrix without stemming",
    "exract 40 topics using the method non-negative matrix factorization",
    "show the topics";
@commands.map({ .&llm-pipeline-segment }).map({ .subst(/:i Output ':'?/):g }).join("⟹\n")

# LSAMonUnit[aAbstracts]⟹
# LSAMonMakeDocumentTermMatrix["StemmingRules"->{},"StopWords"->Automatic]⟹
# LSAMonExtractTopics["NumberOfTopics" -> 40, Method -> "NNMF"]⟹
# LSAMonEchoTopicsTable[]
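The post-processing in that call chain (call the function on each command with .&, strip an "Output:" prefix, join the steps with ⟹) can be tried without an LLM. Below, a lookup table stands in for &llm-pipeline-segment; it is purely hypothetical, and its canned replies just reuse translations shown above. I also strip any space after the colon with \s*, a small variation on the original regex, and pass the empty replacement explicitly.

```raku
# Hypothetical stand-in for the LLM example function: canned replies, no LLM call
my %canned =
    'use the dataset aAbstracts' => 'Output: LSAMonUnit[aAbstracts]',
    'show the topics'            => 'output: LSAMonEchoTopicsTable[]';

my &fake-segment = -> Str $command { %canned{$command} // 'Output: (no translation)' };

my @commands = 'use the dataset aAbstracts', 'show the topics';

my $pipeline = @commands
    .map({ .&fake-segment })                      # call the sub as if it were a method
    .map({ .subst(/:i Output ':'? \s*/, '') })    # drop an "Output:" prefix, any case
    .join("⟹\n");

say $pipeline;
```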

Same workflow specified in Bulgarian:

my &llm-pipeline-segment-bg = llm-example-function(dsl-examples(from => 'Bulgarian')<WL><LSAMon>);
my @commands =
    "използавай данните aAbstracts",
    "направи документ-терм матрицата без да използаваш стъблата на думите",
    "намери 40 теми ползвайки методата не-отрицателна матрична факторизация",
    "покажи темите";
@commands.map({ .&llm-pipeline-segment-bg }).map({ .subst(/:i Output ':'?/):g }).join("⟹\n")

# LSAMonUnit[aAbstracts]⟹
# LSAMonMakeDocumentTermMatrix["StemmingRules"->{}]⟹
# LSAMonExtractTopics["NumberOfTopics"->40,Method->"NNMF"]⟹
# LSAMonEchoTopicsTable[]

There are several ways to organize the DSL examples with respect to the from-languages:

| Type | Comment | Currently used |
|---|---|---|
| Have a separate file for each from-language | Convenient editing and refinement | Yes |
| One file of all examples; from-language is a key for each workflow | Can be produced from the separate files | No |
| Keep English-only DSL examples and use dictionaries of command translations to English | Does not train the LLM directly with the from-language | Dictionaries are kept for reference |

This Jupyter notebook has a workflow for the translation of the English DSL examples into other languages.

[AAp1] Anton Antonov, DSL::Examples, Raku package, (2024-2026), GitHub/antononcube.

[AAp2] Anton Antonov, DSL::Translators, Raku package, (2020-2026), GitHub/antononcube.

[AAp3] Anton Antonov, LLM::Functions, Raku package, (2023-2026), GitHub/antononcube.

[AAp4] Anton Antonov, LLM::Resources, Raku package, (2026), GitHub/antononcube.

[AAv1] Anton Antonov, “Robust LLM pipelines (Mathematica, Python, Raku)”, (2024), YouTube/AAA4prediction.

It is just over 30 days before the start of The Perl and Raku Conference 2026 in Greenville, SC, USA. Even if you are a procrastinator, it’s time to make your plans to attend!

Early bird pricing for registration will end on May 28. Special conference pricing for our block of hotel rooms also expires on May 28. So it is definitely time to get yourself registered and to get your room reserved.

The conference planning team needs to know if you are coming so they can make sure everything is ready for you (t-shirt, lunches, name badge, planetarium tickets, etc). Please register today!

Registration: https://tprc.us

On behalf of the Rakudo development team, I’m happy to announce the May 2026 release of Rakudo #193 – also known as 2026.05. Rakudo is an implementation of the Raku language. [says Will Coleda]

The following people contributed to this release:

Nick Logan, Elizabeth Mattijsen, Will Coleda, Daniel Green, librasteve, Patrick Böker, Timo Paulssen

Editor’s note: I chose not to abridge this very long list of RakuAST items, since one key takeaway from this release is that RakuAST work is gathering a head of steam!

Sparrow6 integration. Ansible replaced by Sparrow6 for Ditana COSMIC (Wayland) configuration management and testing, with substantial contributions from Alexey.

https://github.com/acrion/ditana-installer/releases/tag/v0.9.3

Anton Antonov has served up a double helping of predictions this week…

Elizabeth Mattijsen makes some SUBTLE CHANGES to sprintf in 6.e … With RakuAST getting closer to being the default backend, it was time for me to revisit the work I did on sprintf about 3 years ago (which was halted because of the inability to use synthetically generated code in precomp files; that is now fixed, thanks to @ugexe++).

Weekly Challenge #375 is available for your betterment.

A lot of the time, when coming to Raku from non-Perl languages, folks wrestle with the Raku sigils: $calar, @rray, %ash and &allable.

One straightforward and obvious benefit comes in the form of Str interpolation. Let’s take a look…

my $name = "Ada"; say "Hello, $name";

# Hello, Ada

my $age = 42; say "Age: $age";

# Age: 42

my @colors = <red green blue>; say "@colors[]";

# red green blue

my @colors = <red green blue>; say "First: @colors[0]";

# First: red

my %user = name => "Ada"; say "Name: %user<name>";

# Name: Ada

my $x = 5; my $y = 7; say "$x + $y = {$x + $y}";

# 5 + 7 = 12

my $animal = "cat"; say "A {$animal.uc} naps";

# A CAT naps

my $price = 9.99; say "Price: \$$price";

# Price: $9.99

my $lang = "Raku"; say qq:to/END/;
Learning $lang is fun
END

# multiline interpolation

sub greet { "Hello" }; say "&greet()";

# Hello

my $name = "Ada"; say 'Hello, $name';

# no interpolation in single quotes

- `""` or the `qq` construct introduce interpolation
- `''` or `q`, `Q` do not interpolate
- each sigil (`$`, `@`, `%`, `&`) has a role in triggering interpolation
- `$` may just be used ‘as-is’, followed by your variable identifier of course
- `@` with `[]`, `%` with `{}` or `<>`, `&` with `()`
- `{}` are used to embed any code within interpolated text – just like a block
- `\` escapes a sigil, as in `\$` above

Comparisons:

print(f"Hello, {name}") # Python
printf("Hello, %s\n", name); # C
echo "Hello, $name\n"; # PHP
puts "Hello, #{name}" # Ruby
console.log(`Hello, ${name}`); # JavaScript
say "Hello, $name"; # Raku
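The interpolation rules can be checked directly. This sketch uses my own examples (not from the original post) and captures each interpolated string in a hash so it can be compared. Note in particular that a bare "@colors", with no postcircumfix, is left as literal text, while "@colors[]" interpolates the whole array; that is my reading of the Raku quoting rules, and the checks below exercise it.

```raku
my @colors = <red green blue>;
my %user   = name => 'Ada';
my $name   = 'Ada';
sub greet { 'Hello' }

my %shown =
    bare-array  => "@colors",                  # no postcircumfix, so no interpolation
    whole-array => "@colors[]",                # [] interpolates the whole array
    one-element => "@colors[1]",
    hash-value  => "%user<name>",
    method-call => "$name.uc()",               # method calls need the trailing ()
    sub-call    => "&greet()",
    code-block  => "{ @colors.elems } colors", # any code inside {}
    escaped     => "\$name",                   # backslash suppresses interpolation
    single      => '$name';                    # single quotes never interpolate

say .key, ' => ', .value for %shown.sort(*.key);
```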

To read further, check out the Q-lang (quoting constructs) page in the Raku docs.

Your contribution is welcome; please make a gist and share it via the #raku channel on IRC or Discord.

Congrats to the core team for driving a steady release cadence. It’s very encouraging to see the super long list of RakuAST items … can it be that far to go?

Sorry about the Parrot joke.

Please keep staying safe and healthy, and keep up the good work! Even after week 69 of hopefully only 209.

~librasteve

The Raku package “DSL::Examples”, [AAp1], is a “data package” with examples of translations of DSL commands into programming code.

On the occasion of May 24, I decided to enrich this package with examples in Bulgarian and Russian.

The DSL examples in the package are suitable for LLM few-shot training. The subroutine llm-example-function, provided by “LLM::Functions”, [AAp3], can be used effectively to create translation functions that use these examples.

The use of such LLM translation functions is shown below, and also in the presentation “Robust LLM pipelines (Mathematica, Python, Raku)”, [AAv1].

Similar translations, with far fewer computational resources, are achieved with grammar-based DSL translators; see “DSL::Translators”, [AAp2]. The package “LLM::Resources”, [AAp4], contains LLM graphs for code generation that use the DSL examples of this package.

Get all examples:

use DSL::Examples;
use Data::TypeSystem;
dsl-examples() ==> deduce-type()

# Assoc(Atom((Str)), Tuple([Assoc(Atom((Str)), Tuple([Assoc(Atom((Str)), Atom((Str)), 17), Assoc(Atom((Str)), Atom((Str)), 14), Assoc(Atom((Str)), Atom((Str)), 32), Assoc(Atom((Str)), Atom((Str)), 20), Assoc(Atom((Str)), Atom((Str)), 20), Assoc(Atom((Str)), Atom((Str)), 27), Assoc(Atom((Str)), Atom((Str)), 6)]), 7), Assoc(Atom((Str)), Tuple([Assoc(Atom((Str)), Atom((Str)), 10), Assoc(Atom((Str)), Atom((Str)), 26), Assoc(Atom((Str)), Atom((Str)), 17), Assoc(Atom((Str)), Atom((Str)), 20)]), 4), Assoc(Atom((Str)), Tuple([Assoc(Atom((Str)), Atom((Str)), 15), Assoc(Atom((Str)), Atom((Str)), 23), Assoc(Atom((Str)), Atom((Str)), 33), Assoc(Atom((Str)), Atom((Str)), 20)]), 4), Assoc(Atom((Str)), Tuple([Assoc(Atom((Str)), Atom((Str)), 15), Assoc(Atom((Str)), Atom((Str)), 10), Assoc(Atom((Str)), Atom((Str)), 20), Assoc(Atom((Str)), Atom((Str)), 6)]), 4)]), 4)

Tabulate all translation languages and the available workflow examples:

use Data::Translators;
dsl-examples(from => 'English').map({ $_.key X $_.value.keys }).flat(1).map({ <language workflow> Z=> $_ })».Hash.sort.Array
==> to-dataset()
==> to-html(field-names => <language workflow>)

| language | workflow |
|---|---|
| Python | LSAMon |
| Python | QRMon |
| Python | SMRMon |
| Python | pandas |
| R | DataReshaping |
| R | LSAMon |
| R | QRMon |
| R | SMRMon |
| Raku | DataReshaping |
| Raku | LSAMon |
| Raku | SMRMon |
| Raku | TriesWithFrequencies |
| WL | ClCon |
| WL | DataReshaping |
| WL | LSAMon |
| WL | QRMon |
| WL | SMRMon |
| WL | Tabular |
| WL | TriesWithFrequencies |

Note that dsl-examples is given the language to translate from. Currently, the package contains DSL examples for Bulgarian, English, Portuguese, and Russian (as source languages).

Get the examples for Latent Semantic Analysis (LSA) monadic pipeline segments in Python:

dsl-examples('Python', 'LSAMon') ==> deduce-type(:tally)

# Assoc(Atom((Str)), Atom((Str)), 15)

Create an LLM example function for translating LSA pipeline-building commands:

use LLM::Functions;
my &llm-pipeline-segment = llm-example-function(dsl-examples()<WL><LSAMon>);

Run the LLM function over a list of DSL commands:

my @commands =
    "use the dataset aAbstracts",
    "make the document-term matrix without stemming",
    "exract 40 topics using the method non-negative matrix factorization",
    "show the topics";
@commands.map({ .&llm-pipeline-segment }).map({ .subst(/:i Output ':'?/):g }).join("⟹\n")

# LSAMonUnit[aAbstracts]⟹
# LSAMonMakeDocumentTermMatrix["StemmingRules"->{},"StopWords"->Automatic]⟹
# LSAMonExtractTopics["NumberOfTopics" -> 40, Method -> "NNMF"]⟹
# LSAMonEchoTopicsTable[]

The same workflow, specified in Bulgarian:

my &llm-pipeline-segment-bg = llm-example-function(dsl-examples(from => 'Bulgarian')<WL><LSAMon>);
my @commands =
    "използавай данните aAbstracts",
    "направи документ-терм матрицата без да използаваш стъблата на думите",
    "намери 40 теми ползвайки метода не-отрицателна матрична факторизация",
    "покажи темите";
@commands.map({ .&llm-pipeline-segment-bg }).map({ .subst(/:i Output ':'?/):g }).join("⟹\n")

# LSAMonUnit[aAbstracts]⟹
# LSAMonMakeDocumentTermMatrix["StemmingRules"->{}]⟹
# LSAMonExtractTopics["NumberOfTopics"->40,Method->"NNMF"]⟹
# LSAMonEchoTopicsTable[]

There are several ways to organize the DSL examples with respect to the source languages:

| Type | Comment | Currently used |
|---|---|---|
| A separate file for each source language | Convenient editing and refinement | Yes |
| One file with all examples; the source language is a key for each workflow | Can be generated from the separate files | No |
| Keep only English DSL examples and use dictionaries to translate the commands into English | Does not train the LLM directly with the source language | The dictionaries are kept for reference |

This Jupyter notebook has a procedure for translating the English examples into other languages.

[AAp1] Anton Antonov, DSL::Examples, Raku package, (2024-2026), GitHub/antononcube.

[AAp2] Anton Antonov, DSL::Translators, Raku package, (2020-2026), GitHub/antononcube.

[AAp3] Anton Antonov, LLM::Functions, Raku package, (2023-2026), GitHub/antononcube.

[AAp4] Anton Antonov, LLM::Resources, Raku package, (2026), GitHub/antononcube.

[AAv1] Anton Antonov, “Robust LLM pipelines (Mathematica, Python, Raku)”, (2024), YouTube/AAA4prediction.

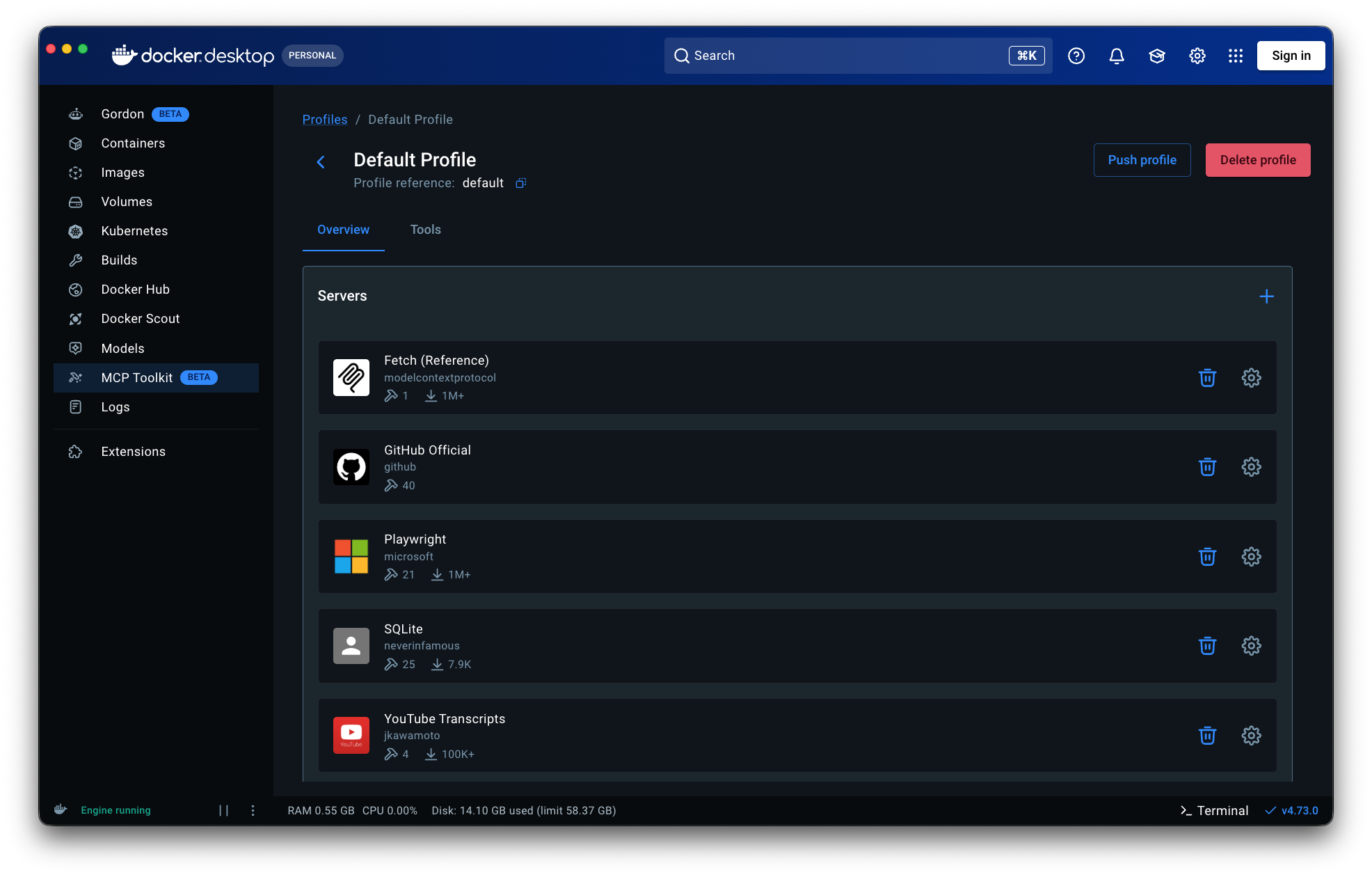

This document (notebook) has a complete usage example of the thin Model Context Protocol (MCP) client provided by the Raku package “MCP::Client”, [AAp1]. The MCP server is run in Docker; see “Docker MCP Toolkit”.

“MCP::Client” provides the class MCP::Client, which creates LLM::Tool objects from the tools an MCP server exposes; those objects can be used with Raku’s Large Language Model (LLM) framework implemented with “LLM::Functions”, “LLM::Prompts”, and “Text::SubParsers”; see [AA3÷6, AAp1÷3].

Because the client is thin and its implementation concise, it is designed to allow both (i) quick, “on-the-spot” MCP utilization and (ii) easy understanding of MCP principles.

Remark: A similar workflow based on a “simple” Python MCP server is given in the file “MCP-client-demo.raku” and corresponding notebook “Thin-MCP-client-demo.ipynb”.

Remark: The Wolfram Language (WL) paclet “MCPClient”, [AAp2], has the same mission as the (Raku) package “MCP::Client”, but WL’s “MCPClient” uses a Functional Programming implementation instead of an Object-Oriented Programming one (as “MCP::Client” does).

Load the packages used below.

use LLM::Functions;
use LLM::Tooling;
use LLM::Prompts;
use Text::SubParsers;
use Data::Translators;
use Data::Importers;
use JSON::Fast;
use MCP::Client;

Get a Docker profile file and show profile’s name and identifier:

my $profile = data-import("https://raw.githubusercontent.com/antononcube/WL-MCPClient-paclet/refs/heads/main/Resources/default_docker_profile.json");
$profile<name id>.raku

# ("Default Docker Profile", "default-docker-profile")

Import the profile (to Docker):

my $profileFile = $*TMPDIR ~ '/dockerProfile.json';
spurt($profileFile, to-json($profile));
my @cmd = "/usr/local/bin/docker", "mcp", "profile", "import", $profileFile;
my $proc = run @cmd, :out, :err;
$proc.out.slurp(:close).say;
$proc.err.slurp(:close).say;

# Imported profile default-docker-profile
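The run ... :out, :err call above captures the child process's standard output and standard error as pipes that can be slurped. A portable way to try that pattern without Docker is to run the current Raku executable ($*EXECUTABLE) on a one-liner; the one-liner itself is just for illustration.

```raku
# Run a child Raku process and capture both of its output streams
my $proc = run $*EXECUTABLE, '-e', 'say "to stdout"; note "to stderr"', :out, :err;

my $out = $proc.out.slurp(:close);   # everything the child printed with say
my $err = $proc.err.slurp(:close);   # everything the child printed with note

say "stdout: $out.trim()";
say "stderr: $err.trim()";
```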

Set the PATH variable (macOS in this example):

for </usr/local/bin /usr/local/share/bin /usr/local/sbin> { %*ENV<PATH> = $_ ~ ':' ~ %*ENV<PATH> unless %*ENV<PATH>.contains($_)}
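The loop above prepends each directory unless %*ENV<PATH> already contains it as a substring. A slightly stricter variation (my own; prepend-to-path is a hypothetical helper, not part of any package) splits PATH on ':' and compares whole entries, so /usr/local/bin is not treated as present just because /usr/local/bin2 is. It works on a plain string, so it can be tried without touching %*ENV.

```raku
# Hypothetical helper: prepend directories to a PATH-style string,
# skipping any directory that is already one of its entries.
sub prepend-to-path(Str:D $path, *@dirs --> Str:D) {
    my @entries = $path.split(':', :skip-empty);
    for @dirs -> $dir {
        # whole-entry comparison, unlike a substring check with .contains
        @entries.unshift($dir) unless $dir eq any(@entries);
    }
    @entries.join(':')
}

say prepend-to-path('/usr/bin:/bin', </usr/local/bin /usr/local/sbin /usr/bin>);
say prepend-to-path('/usr/local/bin2', '/usr/local/bin');
```

To apply it to the environment, something like %*ENV<PATH> = prepend-to-path(%*ENV<PATH>, </usr/local/bin /usr/local/share/bin /usr/local/sbin>) should do, since each new directory ends up in front, just as in the original loop.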

Setup MCP server process creation command elements:

sink my @cmd = 'docker', 'mcp', 'gateway', 'run', '--profile', $profile<id>

Create the MCP client object:

my Bool:D $echo = False;
my Numeric:D $sleep = 5;
my $client = MCP::Client.new(:$echo, :$sleep);
sink $client.start(@cmd);

Initialize the client:

$client.initialize;

# True

Instead of ingesting and loading a Docker profile, a default MCP Toolkit profile can be made with Docker’s dashboard and then used with just the command docker mcp gateway run. Here is what such a default profile looks like:

Get the MCP server tools list:

my @mcp-tools = |$client.list-tools();
@mcp-tools.elems

# 75

Randomly pick some tools and make a table of their tool records using only names and descriptions:

#% html
@mcp-tools.pick(5).sort(*<name>)
==> to-html(field-names => <name description>, align => 'left')

| name | description |
|---|---|
| browser_fill_form | Fill multiple form fields |
| browser_select_option | Select an option in a dropdown |
| create_repository | Create a new GitHub repository in your account or specified organization |
| get_commit | Get details for a commit from a GitHub repository |
| get_latest_release | Get the latest release in a GitHub repository |

Show tools of the official GitHub MCP server:

#% html
@mcp-tools.grep(*<description>.contains('GitHub',:i)) ==> to-html(field-names => <name description>, align => 'left')

| name | description |
|---|---|
| add_issue_comment | Add a comment to a specific issue in a GitHub repository. Use this tool to add comments to pull requests as well (in this case pass pull request number as issue_number), but only if user is not asking specifically to add review comments. |
| assign_copilot_to_issue | Assign Copilot to a specific issue in a GitHub repository. This tool can help with the following outcomes: a Pull Request created with source code changes to resolve the issue. More information can be found at: https://docs.github.com/en/copilot/using-github-copilot/using-copilot-coding-agent-to-work-on-tasks/about-assigning-tasks-to-copilot |
| create_branch | Create a new branch in a GitHub repository |
| create_or_update_file | Create or update a single file in a GitHub repository. If updating, you should provide the SHA of the file you want to update. Use this tool to create or update a file in a GitHub repository remotely; do not use it for local file operations. In order to obtain the SHA of original file version before updating, use the following git command: `git rev-parse <branch>:<path to file>`. SHA MUST be provided for existing file updates. |
| create_pull_request | Create a new pull request in a GitHub repository. |
| create_repository | Create a new GitHub repository in your account or specified organization |
| delete_file | Delete a file from a GitHub repository |
| fork_repository | Fork a GitHub repository to your account or specified organization |
| get_commit | Get details for a commit from a GitHub repository |
| get_file_contents | Get the contents of a file or directory from a GitHub repository |
| get_latest_release | Get the latest release in a GitHub repository |
| get_me | Get details of the authenticated GitHub user. Use this when a request is about the user’s own profile for GitHub. Or when information is missing to build other tool calls. |
| get_release_by_tag | Get a specific release by its tag name in a GitHub repository |
| get_tag | Get details about a specific git tag in a GitHub repository |
| issue_read | Get information about a specific issue in a GitHub repository. |
| issue_write | Create a new or update an existing issue in a GitHub repository. |
| list_branches | List branches in a GitHub repository |
| list_commits | Get list of commits of a branch in a GitHub repository. Returns at least 30 results per page by default, but can return more if specified using the perPage parameter (up to 100). |
| list_issues | List issues in a GitHub repository. For pagination, use the ‘endCursor’ from the previous response’s ‘pageInfo’ in the ‘after’ parameter. |
| list_pull_requests | List pull requests in a GitHub repository. If the user specifies an author, then DO NOT use this tool and use the search_pull_requests tool instead. |
| list_releases | List releases in a GitHub repository |
| list_tags | List git tags in a GitHub repository |
| merge_pull_request | Merge a pull request in a GitHub repository. |
| pull_request_read | Get information on a specific pull request in GitHub repository. |
| push_files | Push multiple files to a GitHub repository in a single commit |
| request_copilot_review | Request a GitHub Copilot code review for a pull request. Use this for automated feedback on pull requests, usually before requesting a human reviewer. |
| search_code | Fast and precise code search across ALL GitHub repositories using GitHub’s native search engine. Best for finding exact symbols, functions, classes, or specific code patterns. |
| search_issues | Search for issues in GitHub repositories using issues search syntax already scoped to is:issue |
| search_pull_requests | Search for pull requests in GitHub repositories using issues search syntax already scoped to is:pr |
| search_repositories | Find GitHub repositories by name, description, readme, topics, or other metadata. Perfect for discovering projects, finding examples, or locating specific repositories across GitHub. |
| search_users | Find GitHub users by username, real name, or other profile information. Useful for locating developers, contributors, or team members. |
| sub_issue_write | Add a sub-issue to a parent issue in a GitHub repository. |
| update_pull_request | Update an existing pull request in a GitHub repository. |

Make LLM::Tool objects:

my @tools = @mcp-tools.grep({ $_<description> ~~ /:i GitHub | YouTube/ }).map({ $client.to-llm-tool($_) });
deduce-type(@tools)

# Vector((Any), 35)

Tools without properties:

.say for |@mcp-tools.grep({ !$_.<inputSchema><properties> }).map(*<name description inputSchema>)

# (browser_close Close the page {$schema => https://json-schema.org/draft/2020-12/schema, additionalProperties => False, properties => {}, type => object})
# (browser_navigate_back Go back to the previous page in the history {$schema => https://json-schema.org/draft/2020-12/schema, additionalProperties => False, properties => {}, type => object})
# (get_me Get details of the authenticated GitHub user. Use this when a request is about the user's own profile for GitHub. Or when information is missing to build other tool calls. {properties => {}, type => object})
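The filter works because an empty properties hash is False in Boolean context, so the negation selects tools that take no arguments. A small hand-made list of tool records (hypothetical, shaped like the inputSchema entries above) shows the same grep in isolation.

```raku
# Hypothetical tool records, shaped like the MCP tool list above
my @mcp-tools =
    %( name => 'browser_close', inputSchema => %( type => 'object', properties => %() ) ),
    %( name => 'get_me',        inputSchema => %( type => 'object', properties => %() ) ),
    %( name => 'get_commit',    inputSchema => %( type => 'object',
                                                  properties => %( sha => %( type => 'string' ) ) ) );

# An empty Hash is False, so ! keeps only the tools without input properties
my @no-args = @mcp-tools.grep({ !$_<inputSchema><properties> }).map(*<name>);
say @no-args;
```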

Make an LLM access configuration with the tools:

my $conf = llm-configuration('ChatGPT', model => 'gpt-5.3-chat-latest', :@tools, :8192max-tokens);

# LLM::Configuration(:name("chatgpt"), :model("gpt-5.3-chat-latest"), :module("WWW::OpenAI"), :max-tokens(8192))

Find the un-accepted GitHub Pull Requests (PRs) — using the tool “list_pull_requests”:

#% markdown
my $res = llm-synthesize("Which of my -- antononcube -- GitHub pull requests are pending?", e => $conf);

Create an LLM function that is assumed to invoke the GitHub MCP server tool “list_branches”:

sink my &fGHB = -> $repo {
    llm-synthesize([
        "Give the branches of the GitHub repo:",
        $repo,
        'Separate the features from the bugfixes',
        llm-prompt('NothingElse')('JSON')
    ], e => $conf, form => sub-parser('JSON'):drop)
}

Remark: Currently, llm-function of “LLM::Functions”, [AAp3], does not support the use of LLM-tools, hence the “block-with-LLM-synthesis” definition.

Get branches data from a repository:

sink my $res = &fGHB('bduggan/raku-jupyter-kernel');

#% html
$res».subst(/ [ bugfix | feature ] '/' /) ==> to-html(align=>'left')

| kind | branches |
|---|---|
| bugfixes | trap_sigint, work-around-tty-issue |
| features | add_some_messages, add-workflows, better_launcher, determine_version, implement_comm_info_request, more_features, raku-rename, rename_executable, update-readme |
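The ».subst call above is a hyper method call: on a hash it is applied to the values, descending into the arrays of branch names. Here it is on a hand-made hash (hypothetical data, shaped like the parsed JSON, with prefixed names borrowed from the table); I pass the empty replacement string explicitly.

```raku
# Hypothetical stand-in for the parsed JSON: branch names grouped by kind
my %res =
    bugfixes => ['bugfix/trap_sigint', 'bugfix/work-around-tty-issue'],
    features => ['feature/add-workflows', 'feature/raku-rename'];

# ». calls .subst on each string inside the nested arrays, keeping the keys
my %clean = %res».subst(/ [ bugfix | feature ] '/' /, '');

say .key, ': ', .value.join(', ') for %clean.sort(*.key);
```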

Using a tool of a different MCP server:

# my $url = 'https://www.youtube.com/watch?v=-QtIVv-oz5Y';# my $res = llm-synthesize(# "Get you get the transcript of this URL: $url",# e => $conf);

Kill the MCP client process:

$client.kill;

[AA1] Anton Antonov, “LLM function calling workflows (Part 1, OpenAI)”, (2025), RakuForPrediction at WordPress.

[AA2] Anton Antonov, “LLM function calling workflows (Part 2, Google’s Gemini)”, (2025), RakuForPrediction at WordPress.

[AA3] Anton Antonov, “LLM function calling workflows (Part 3, Facilitation)”, (2025), RakuForPrediction at WordPress.

[AA4] Anton Antonov, “LLM function calling workflows (Part 4, Universal specs)”, (2025), RakuForPrediction at WordPress.

[AAp1] Anton Antonov, MCP::Client, Raku package, (2026), GitHub/antononcube.

[AAp2] Anton Antonov, MCPClient, Wolfram Language paclet, (2026), Wolfram Language Paclet Repository.

[AAp3] Anton Antonov, LLM::Functions, Raku package, (2023-2026), GitHub/antononcube.

[AAp4] Anton Antonov, LLM::Prompts, Raku package, (2023-2026), GitHub/antononcube.

[AAp5] Anton Antonov, Text::SubParsers, Raku package, (2023), GitHub/antononcube.

The https://slangify.org site was made as a way to illustrate the benefits of Raku as a DSL tool.

DSLs are a secret weapon for LLM effectiveness because their human-readable, domain-centric structure constrains both the training set and model outputs, making them significantly easier for LLMs to generate accurately.
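To make the point about constraining model output concrete: with a grammar, a generated line either parses or is rejected outright. The grammar below is a minimal, hypothetical invoice-line format made up for this note (it is not the Slangify Invoice DSL).

```raku
# Hypothetical invoice-line format:  <quantity> x <item name> @ <unit price>
grammar InvoiceLine {
    rule  TOP   { <qty> 'x' <item> '@' <price> }   # rule: whitespace between parts allowed
    token qty   { \d+ }
    token item  { \w+ [ \s+ \w+ ]* }               # one or more words
    token price { \d+ [ '.' \d ** 2 ]? }           # optional two-digit cents
}

my $line = InvoiceLine.parse('3 x blue widget @ 2.50');
say "{$line<qty>} x {$line<item>} = {+$line<qty> * +$line<price>}";

# A malformed line simply fails to parse (.parse returns Nil)
say so InvoiceLine.parse('three blue widgets for 2.50');
```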

Thanks to Fernando Correa de Oliveira for his very cool work on Grammar::Editor and enabling the Playground. This has the promise to be a key tool for DSL creators to share with less technically oriented colleagues (such as accountants who want to leverage AI).

I wrote a simple Invoice DSL to help showcase Raku Grammars for this domain. Please grab your keyboard, spin up your favorite LLM (if that’s how you roll) and help expand the picture to any actual business domain. Specifically, it would be great to see:

If you have a DSL project to share on the Examples or Ecosystem page, please let us know – it would be great to showcase your work here.

Don’t Miss the Perl and Raku Conference 2026 in Greenville, SC, USA

SAVE THE DATES! Friday through Sunday, June 26-28

Registration is open: https://tprc.us/tprc-2026-gsp

Weekly Challenge #374 is available for your bemusement.

I saw an exchange on IRC/Discord chat recently; it went something like this:

I have this…

sub Baz ($a, :$b!, :$c = 'C') { say "$a$b$c" }

Baz('A',:b('B')); #ABC

…now I’d like Foo() to simply pass its args to Baz(), something like…

sub Foo(?) { Baz(|c) }

Foo('A',:b('B')); #want ABC

…and, sure enough, came an answer.

sub Foo(|c) { Baz(|c) }

Foo('A',:b('B')); #ABC

In English, that means: (i) c is just an identifier; (ii) in the Foo signature, |c captures all of the arguments into a Capture; (iii) in the call to Baz(), |c flattens the Capture back into the arguments of the call.

Bingo!

[lucs & lizmat to thank for the excellent question and answer]
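To see what the Capture actually holds, here is a minimal sketch reusing Baz and Foo from the exchange above. Two assumptions: Baz returns its string instead of printing it (so the results can be checked), and Inspect is a hypothetical helper added only for illustration. A Capture literal built with \( … ) can be flattened in exactly the same way.

```raku
# Baz and Foo as in the exchange above, except Baz returns its string
sub Baz($a, :$b!, :$c = 'C') { "$a$b$c" }

# |c collects every argument (positional and named) into the Capture c,
# and Baz(|c) flattens it back into an argument list
sub Foo(|c) { Baz(|c) }

# Hypothetical helper: count the positionals and list the named-arg keys
sub Inspect(|c) { c.list.elems, c.hash.keys.sort.join(',') }

say Foo('A', :b('B'));            # ABC
say Foo('A', :b('B'), :c('Z'));   # ABZ
say Inspect('A', :b('B'));        # (1 b)

# A Capture literal can be built up front and flattened the same way
my $args = \('A', :b('B'));
say Baz(|$args);                  # ABC
```

Because Foo never names its parameters, any change to Baz's signature is picked up automatically.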

Your contribution is welcome, please make a gist and share via the #raku channel on IRC or Discord.

It has been quite a ride this last week making the https://slangify.org site. Thanks to all those involved for their support; getting to grips with Claude Code was an interesting learning curve. Hopefully, the site will interest non-Raku coders in trying our language and enjoying some of the -Ofun.

Please keep staying safe and healthy, and keep up the good work! Even after week 68 of hopefully only 209.

~librasteve

Post Image: Art of Failure by XoMEoX, CC BY 2.0 https://creativecommons.org/licenses/by/2.0, via Wikimedia Commons

Avuserow tells us that Raku’s Failures are a Great Success while bathed in a beautiful retro orange glow.

Raku has failures, a type of delayed exceptions. In try blocks or if you use it (e.g. by calling a method on it), it throws an exception. If you store it as a value, it’s an undefined value (which will throw an exception if you use it without handling it).

This is a great compromise that works with try/CATCH in most code and also allows for ergonomic small scripts (or one liners)….
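A minimal sketch of those behaviours, assuming a hypothetical parse-hours helper that uses fail instead of die:

```raku
# Hypothetical helper: returns a Failure (via fail) instead of throwing
sub parse-hours(Str $s) {
    fail "not a number: $s" unless $s ~~ / ^ \d+ $ /;
    +$s
}

# Stored as a value, a Failure is just an undefined value;
# checking .defined also marks it as handled
my $h = parse-hours('abc');
say $h.defined;       # False
say $h ~~ Failure;    # True

# Using it (here, in arithmetic) throws the delayed exception
my $r = try { parse-hours('xyz') + 1 };
say $!.message;       # not a number: xyz
say $r.defined;       # False

# In small scripts, // gives an easy fallback for undefined results
say parse-hours('12') // 0;   # 12
say parse-hours('x')  // 0;   # 0
```

The caller chooses: test the result, wrap it in try, or let it blow up on first use.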

Fernando Correa de Oliveira teases us with a Grammar Playground built on Selkie UI. An impressive application of the recently released Selkie TUI framework by Matt Doughty. Looking forward to the release…

Yours truly continues to pour DieSeL (DSL = Domain Specific Languages – geddit?) on the Raku fire. This time I pose the question Why Raku Grammars? I try to mimic a typical Python [1] coder’s experience when they want a DSL:

And then I see how that compares to Raku Grammars in terms of ease of use and code maintainability. The aim is to showcase the unique Raku capabilities that make it worth the pain of switching to a new tool, i.e. “Why Switch to Raku?” If you don’t want to read too many words, then eyeball the side-by-side results for an instant impression.

[1] I chose Python as the comparison since I guess there are thousands of Python coders who need to make a DSL … I would encourage others to do the same for other popular languages – Go, TS, Rust and so on – and share the results.

Don’t Miss the Perl and Raku Conference 2026 in Greenville, SC

SAVE THE DATES! Friday through Sunday, June 26-28

Registration is open: https://tprc.us/tprc-2026-gsp

Weekly Challenge #373 is available for your fortification.

I joined the Raku Study Group yesterday and enjoyed hanging out with some keen Raku coders. Bruce Gray has been an inspiration to many of us with his very thorough, patient and passionate explanations of many of Raku’s killer features. I highly recommend his Raku for Beginners Part 1 and Part 2.

One item that struck me at the time, and that had a lasting impact, was his summary of the “cat’s ears” Range demarcation syntax (see minute 31 of Part 2 to hear it from the horse’s mouth):

say 1 .. 5;          # 1..5
say (1 .. 5).WHAT;   # (Range)
say |(1 .. 5);       # 12345
say |(1 ^.. 5);      # 2345
say |(1 ..^ 5);      # 1234
say |(1 ^..^ 5);     # 234
say |(^5);           # 01234 (short for 0..^5)

I think that table says it. Reproduced here with Bruce’s kind permission.
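The cat’s ears are not just about which integers get listed. The same exclusions apply when a Range is used for a membership test, including for non-integers, and a Range can report them. A small sketch using only core Range methods:

```raku
# Endpoint exclusion also applies to membership tests, not only to iteration
say 1   ~~ 1 ^..^ 5;    # False
say 2.5 ~~ 1 ^..^ 5;    # True
say 5   ~~ 1 ..^ 5;     # False

# A Range can be asked which ends it excludes and how many integers it holds
my $r = 1 ^..^ 5;
say ($r.excludes-min, $r.excludes-max);   # (True True)
say $r.elems;                             # 3
say (^5) eqv (0 ..^ 5);                   # True
```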

Your contribution is welcome, please make a gist and share via the #raku channel on IRC or Discord.

Some interesting stuff this week … it feels like there is a lot of -Ofun to be had with Selkie and Selkie::UI, and I suspect more will be coming soon. Tune in next time.

Please keep staying safe and healthy, and keep up the good work! Even after week 67 of hopefully only 209.

~librasteve

In the last episode, I decided to make a DSL in both Python and Raku using Claude Code. If you want to know why there’s Python in a Raku blog, check out the previous post.

I asked Claude to define a simple Invoice Domain Specific Language (DSL). The result was ideal for our purpose, in that it is a very simple, yet typical DSL formulation.

Here is the example DSL source code:

invoice INV-001
date 2026-04-29
client "Acme Corp"
item "Website redesign" hours 10 rate 150
item "Hosting setup" hours 2 rate 100
tax 8%

And here, the target formatted output:

Invoice: INV-001
Date: 2026-04-29
Client: Acme Corp

Description                    Hours      Rate    Subtotal
----------------------------------------------------------
Website redesign                10.0    150.00     1500.00
Hosting setup                    2.0    100.00      200.00
----------------------------------------------------------
                                    Subtotal       1700.00
                                    Tax (8%)        136.00
                                    Total          1836.00

Since the full code is quite lengthy for a Blog post, I have made a simple web-page view of the two options side-by-side. Suggest you crank that open in a new window and view it alongside.
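For a quick taste without opening that page, here is a minimal sketch of a Raku grammar for this DSL that computes the totals by walking the named captures. It is not the Claude-generated code from the comparison. The one-field-per-line layout, the rule and token names other than field-line, quoted and number, and the render helper are all assumptions made for illustration.

```raku
# Minimal sketch; rule/token names and render() are illustrative, not the
# code from the side-by-side page
grammar Invoice-DSL {
    rule  TOP        { 'invoice' <invoice-id> <field-line>* }
    token invoice-id { [ \w | '-' ]+ }
    rule  field-line {
        | 'date'   <date>
        | 'client' <client=quoted>
        | 'item'   <desc=quoted> 'hours' <hours=number> 'rate' <rate=number>
        | 'tax'    <tax-rate=number> '%'
    }
    token date   { \d**4 '-' \d**2 '-' \d**2 }
    token quoted { '"' <( <-["]>* )> '"' }   # <( )> keeps only the text inside the quotes
    token number { \d+ [ '.' \d+ ]? }
}

# Walk the named captures and return (subtotal, tax, total)
sub render(Str $src) {
    my $m = Invoice-DSL.parse($src) or die 'could not parse invoice';
    my ($subtotal, $tax-rate) = 0, 0;
    for $m<field-line>.list -> $f {
        $subtotal += +$f<hours> * +$f<rate> with $f<hours>;
        $tax-rate  = +$f<tax-rate>          with $f<tax-rate>;
    }
    my $tax = $subtotal * $tax-rate / 100;
    $subtotal, $tax, $subtotal + $tax
}

my $src = q:to/END/;
invoice INV-001
date 2026-04-29
client "Acme Corp"
item "Website redesign" hours 10 rate 150
item "Hosting setup" hours 2 rate 100
tax 8%
END

my ($subtotal, $tax, $total) = render($src);
printf "%-10s %10.2f\n", 'Subtotal', $subtotal;
printf "%-10s %10.2f\n", 'Tax (8%)', $tax;
printf "%-10s %10.2f\n", 'Total', $total;
```

Because these are rules, the whitespace between words (including the newlines) is handled by the implicit <.ws> calls rather than by explicit newline tokens.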

As anticipated, Claude worked very smoothly to create a Python implementation. In fact, it proposed two options:

I chose the latter as this seemed a better like-for-like comparison with Raku and its built-in support for Grammars.

Frankly, I expected Claude to be less adept at generating the Raku solution. To my pleasant surprise, Raku was on a par with Python in terms of the code quality. 10/10 to Claude for this.

Claude was also easy to direct to follow my preferred options for setting up a new Raku project. In this case, that meant using App::Mi6 and IntelliJ with a custom .gitignore, dist.ini and README.md copyright.

Here is the conclusion of the comparison:

I would rather inherit (2) because:

(1) feels like “practical glue code around a parser.”

(2) feels like “a coherent language implementation.”

That usually translates to better long-term maintainability.

Read on for the detailed analysis…

The grammar is compact, but several rules rely on positional interpretation:

field_line: "date" DATE
          | "client" ESCAPED_STRING

Then later:

token = tokens[0]
if token.type == "DATE":
    return {"date": …}
else:
    return {"client": …}

This means the meaning of a parsed token depends on inference after parsing rather than being explicit in the grammar.

It also uses underscore-prefixed helper tokens (_NL, _WS) and a packed grammar string, which is functional but less self-documenting.

The grammar names captures directly:

rule field-line {
    | date <date>
    | client <client=quoted>
    | tax <tax-rate=number> '%'
}

That is clearer because the parse structure already communicates intent. Someone reading it can understand the DSL without needing to inspect later transformation code.

(2) is significantly clearer.

Uses dataclasses cleanly:

@dataclass
class Invoice:

This is nice and concise. Computed properties like subtotal, tax, total are easy to understand.

However, items: list = field(default_factory=list) is weakly typed compared with a specific list element type.

Also, inv.__dict__.update(item) inside the transformer bypasses encapsulation and makes mutation less explicit.

The object model is more explicit:

has Item @.items;
has Real $.tax-rate is rw = 0.0;

Attribute constraints and intent are clearer. The transformation hook:

method transform(Str $attr, $raw) { ... }

centralizes input normalization.
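To make that concrete, here is a hypothetical pair of classes in the same spirit: typed attributes, computed totals, and a transform hook with the signature quoted above. The class bodies and the transform logic are assumptions for illustration, not the generated code.

```raku
# Hypothetical classes in the spirit of the excerpt (not the generated code)
class Item {
    has Str  $.desc  is required;
    has Real $.hours is required;
    has Real $.rate  is required;
    method subtotal(--> Real) { $!hours * $!rate }
}

class Invoice {
    has Str  $.id;
    has Item @.items;                   # only Item objects may be stored here
    has Real $.tax-rate is rw = 0.0;

    # Hypothetical normalization hook with the excerpt's signature:
    # turns raw parsed text into the attribute's value type
    method transform(Str $attr, $raw) {
        $attr eq 'tax-rate' ?? +$raw !! ~$raw
    }

    method subtotal(--> Real) { [+] @!items.map(*.subtotal) }
    method tax(--> Real)      { self.subtotal * $!tax-rate / 100 }
    method total(--> Real)    { self.subtotal + self.tax }
}

my $inv = Invoice.new(id => 'INV-001');
$inv.items.push: Item.new(desc => 'Website redesign', hours => 10, rate => 150);
$inv.items.push: Item.new(desc => 'Hosting setup',    hours => 2,  rate => 100);
$inv.tax-rate = $inv.transform('tax-rate', '8');
say $inv.total;   # 1836
```

Pushing anything that is not an Item onto @.items dies with a type-check error. That is the kind of constraint a plain `items: list` does not express.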

(2) has the cleaner model, though (1)’s dataclasses are pleasantly concise.

Transformation logic depends on runtime type checks:

if isinstance(item, dict):
    ...
elif isinstance(item, Item):
    ...

and token positions:

desc = str(tokens[0])
hours = float(tokens[1])
rate = float(tokens[2])

This creates maintenance risk: if the grammar changes, positional indexes can silently break.

The actions layer constructs objects from named captures:

$inv.action($_) for $;

$inv.items.push(Item.action($_)) for $;

This is less brittle because it relies more on semantic names than array positions.
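A minimal sketch of that pattern, using a hypothetical mini-grammar for item lines only and an actions class that builds each item from named captures with make/made. All names here are illustrative assumptions.

```raku
# Hypothetical mini-grammar for item lines only (names are illustrative)
grammar Items {
    rule  TOP       { <item-line>+ }
    rule  item-line { 'item' <desc=quoted> 'hours' <hours=number> 'rate' <rate=number> }
    token quoted    { '"' <( <-["]>* )> '"' }
    token number    { \d+ [ '.' \d+ ]? }
}

# Actions read captures by name, so reordering the rule's parts
# does not silently shift which value lands in which field
class Items-Actions {
    method TOP($/)       { make $<item-line>».made }
    method item-line($/) {
        make %( desc => ~$<desc>, hours => +$<hours>, rate => +$<rate> );
    }
}

my $m = Items.parse(
    'item "Website redesign" hours 10 rate 150 item "Hosting setup" hours 2 rate 100',
    actions => Items-Actions,
);

for $m.made.list -> %i {
    say "%i<desc>: { %i<hours> * %i<rate> }";
}
```

If the DSL later allowed `rate` before `hours`, only the rule would change; the action method would stay as it is.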

(2) is easier to evolve safely.

Some surprising elements:

ambiguity="resolve"
text.strip() + "\n"
__dict__

These are maintainability smells because future readers must ask why they are necessary.

The code feels more internally consistent: grammar captures feed actions which populate typed objects.

There is some syntactic density, but less hidden workaround logic.

(2) has fewer surprises.

(2) – grammar and object mapping read like the DSL itself

(1) – readable overall, but transformation logic is more indirect

(2) – stronger separation of concerns, named captures, less brittle

(1) – workable, but more dependent on token order and ad hoc mutation

While I generally prefer to write my own posts, here I invited ChatGPT to compare both examples.

compare the legibility and ease of maintenance for this code (do not include aspects about the popularity or maturity of the language, confine analysis to the code examples

I feel that the AI result is a well-reasoned, well-structured and fair report. TBH, I would have been more biased and less expert had I tried to do this myself… I endorse it.

While some folk dislike head-to-head comparisons of programming languages, I feel that they are a necessary step to properly understand the relative technical benefits of a language with built-in support for Grammars and DSLs vs. the use of an external library such as Lark, despite the maturity and popularity of Python.

I hope that this analysis of Raku in a simple, but realistic example has been a pleasant surprise and that this helps to explain the enthusiasm of our community. No change is without risk, but we are convinced that the benefits of switching to Raku for DSL applications are compelling and invite you to give it a serious evaluation for your next DSL project.

~librasteve


After several weeks in clean-up and exit mode, I have now “finished” my “work” on both Crag and Air.

<aside>With Crag I wanted to get to a point where I could at least show something and share to the wider world. With Air, I have been keen to address the feedback from [Coke] – with a refactor to provide stubs and to embrace the soon-to-be-released Air::Plugin::RakuDoc via a collaboration with finanalyst.</aside>

<aside>Perhaps later I will return to Crag – either as a Selkie app, or as a Slang or both. I will definitely continue to build on Air, that is generally “demand driven” so if you want a feature, please ask!</aside>

Around the turn of the year, I made a resolution: to engage and attract 1000 more Raku coders. I believe that the racehorse to back is called DieSeL (that’s a backronym for DSL aka Domain Specific Languages aka Slangs).

Picking a direction is the hardest thing.

OK, so the challenge is:

Convince a bunch of developers that Raku is a new, improved way to make a DSL

<aside>Several times – at conferences and so on – people have asked me “what is Raku?” or “how is Raku different from [Python|PHP|JS|Rust…] ” and I have struggled to explain. Currently https://raku.org says Raku is an expressive, multi‑paradigm, Open Source language that works the way you think! Well, sure. But this doesn’t explain “what is it good for?”, “who uses it and why?”. So I believe that having a clear horse helps to crystallise this.</aside>

Since that is hopefully clear, we can move to:

So, in thinking about the best way to start to make some examples and some materials, I decided to try making a DSL in Raku and to compare doing similar in Python.

I chose Python since it has a very large community and a very mature (and rich) ecosystem, and I reckon that showing how Raku compares to something familiar would interest and attract some new recruits. In many ways, Python is of course similar in intent and position to Raku:-

On the last one, you can kind of write Raku in “Python-style” that is not too intimidating for less geeky folks.